Kubernetes Operators: The New Backbone of Automated Machine Learning Pipelines

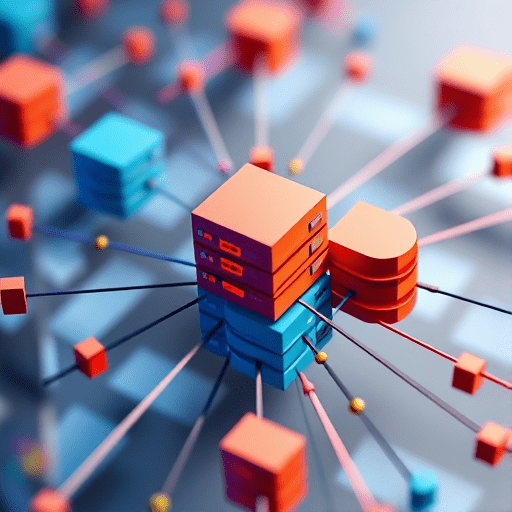

In today’s data‑driven world, the speed and reliability of end‑to‑end machine learning (ML) workflows can determine a company’s competitive edge. Kubernetes Operators have emerged as the go‑to solution for orchestrating complex ML pipelines, providing declarative management of training jobs, versioning, and dynamic scaling. By embedding domain expertise into reusable controllers, Operators turn raw Kubernetes clusters into smart, self‑healing ML platforms that can run at scale in the cloud.

1. What Are Kubernetes Operators?

An Operator is a Kubernetes extension that codifies the operational knowledge of an application or service. It watches custom resources, which are instances of the types declared through Custom Resource Definitions (CRDs), and reacts to changes by creating or updating Kubernetes primitives such as pods, services, and volumes to maintain the desired state. Unlike a plain Helm chart, which renders and applies manifests only when it is installed or upgraded, an Operator reconciles the system continuously and can handle upgrades, backups, rolling updates, and rollbacks.

Key Components of an Operator

- Custom Resource Definition (CRD): The schema that represents the application’s desired state.

- Controller: The reconciliation loop that watches for changes to custom resources and applies the necessary actions.

- Reconciliation Logic: Business rules that transform a CRD into concrete Kubernetes objects.

- Event Handlers: Hooks for monitoring, logging, and alerting.
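How these components fit together can be sketched in a few lines of Python. This is a minimal, in-memory illustration of a single reconciliation step, not a real controller: the `TrainingJobSpec` type, the `reconcile` function, and the worker naming scheme are all hypothetical, and a production Operator would normally be built on a framework such as Kubebuilder, Operator SDK, or Kopf that talks to the API server.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrainingJobSpec:
    """Desired state, as a custom resource's spec might declare it (hypothetical schema)."""
    name: str
    workers: int


def reconcile(spec: TrainingJobSpec, observed_pods: set[str]) -> list[tuple[str, str]]:
    """Compare desired and observed state and return the actions needed to converge.

    Each action is a ("create" | "delete", pod_name) pair. Once the actions have
    been applied, calling this again returns an empty list, which is what makes
    the loop safe to run repeatedly.
    """
    desired_pods = {f"{spec.name}-worker-{i}" for i in range(spec.workers)}
    to_create = sorted(desired_pods - observed_pods)
    to_delete = sorted(observed_pods - desired_pods)
    return [("create", p) for p in to_create] + [("delete", p) for p in to_delete]
```

Calling `reconcile(TrainingJobSpec("resnet", 3), {"resnet-worker-0"})` would propose creating the two missing workers. The function only computes a diff; in a real controller the returned actions would become API calls.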

2. Operators in the ML Context

ML pipelines involve numerous moving parts: data ingestion, feature extraction, model training, hyper‑parameter tuning, evaluation, deployment, and monitoring. Managing these components manually leads to brittle workflows and hidden configuration drift. Operators encapsulate each stage as a CRD, enabling data scientists to declare what they want rather than how to build it.

Benefits for ML Teams

- Reduced operational overhead: One declarative file per pipeline step.

- Consistency: Enforced best practices across all experiments.

- Reproducibility: Each custom resource captures every hyper‑parameter and dataset version, and treating a submitted resource as immutable keeps the record of a run intact.

- Scalability: Operators can auto‑scale training jobs based on GPU or CPU demand.

3. Building an End‑to‑End Pipeline with Operators

Consider a typical “train‑then‑deploy” workflow:

- Data Ingestion Operator pulls raw data from S3, validates schema, and stores it in a versioned dataset bucket.

- Feature Store Operator materializes features, caches them in Redis, and exposes an API for downstream jobs.

- Training Operator launches a Spark or TensorFlow workload as a Kubernetes Job, passing in the dataset URI, hyper‑parameters, and GPU request.

- Model Registry Operator records the resulting model artifacts in a registry (e.g., MLflow), tags the model with the experiment ID, and records evaluation metrics.

- Deployment Operator deploys the model to a Knative endpoint or a GPU‑enabled Inference Service, automatically rolling out the latest stable version.

Each operator exposes a lightweight CRD; a data scientist writes a single YAML file that stitches them together via dependencies. The reconciliation loop automatically triggers downstream operators once the preceding step reports success.
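The "trigger downstream once upstream succeeds" behaviour can be illustrated with Python's standard `graphlib` module. This is only a sketch under stated assumptions: the step names in `PIPELINE` and the `run_step` callback are hypothetical stand-ins for the five operators above, and a real system would drive each step from custom resource status rather than from a function's return value.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline: each step maps to the set of steps it depends on.
PIPELINE = {
    "ingest": set(),
    "features": {"ingest"},
    "train": {"features"},
    "register": {"train"},
    "deploy": {"register"},
}


def run_pipeline(dependencies, run_step):
    """Run steps in dependency order and stop scheduling work behind a failure.

    `run_step(name)` returns True on success. Returns the steps that succeeded.
    """
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()
    succeeded = []
    while sorter.is_active():
        ready = sorter.get_ready()
        if not ready:
            break  # nothing is ready because a failed step blocks the rest
        for step in sorted(ready):
            if run_step(step):
                succeeded.append(step)
                sorter.done(step)  # unblocks the steps that depend on this one
            # A failed step is never marked done, so its dependents never become ready.
    return succeeded
```

If `run_step` fails for `"train"`, only `"ingest"` and `"features"` are reported as succeeded, and registration and deployment are never attempted.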

4. Versioning and Model Registry as Operators

Version control is paramount in ML. Operators can enforce immutable artifact management by integrating with GitOps workflows:

- Data Version Operator tags raw datasets and pushes metadata to a Git repository.

- Experiment Operator stores hyper‑parameter configurations and seed values in a Git branch, ensuring every run is reproducible.

- Model Registry Operator leverages MLflow or DVC to record model signatures, metrics, and provenance.
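One way to make "every run is reproducible" checkable is to derive a stable fingerprint from the inputs that define a run. The sketch below is an assumption about how such an identifier could be computed, not something MLflow or DVC prescribes; the `run_fingerprint` function and its field names are hypothetical.

```python
import hashlib
import json


def run_fingerprint(hyperparameters: dict, dataset_version: str, seed: int) -> str:
    """Return a stable SHA-256 hex digest identifying one experiment configuration."""
    payload = {
        "hyperparameters": hyperparameters,
        "dataset_version": dataset_version,
        "seed": seed,
    }
    # sort_keys and fixed separators make the serialization independent of dict order.
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()
```

Two runs with the same hyper‑parameters, dataset version, and seed produce the same digest regardless of key order, so the digest can serve as a Git tag or registry label that ties a model back to its exact configuration.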

Because custom resources are stored in etcd, they are covered by whatever etcd snapshot and backup procedure the cluster already uses. Together with the Git history, this makes it possible to roll a pipeline’s configuration back to a previous state if a new training iteration introduces regressions.

5. Auto‑Scaling Strategies with Operators

Auto‑scaling is essential for cost‑efficient model training. Operators can orchestrate scaling in several ways:

5.1 Pod Autoscaling for GPU‑Intensive Jobs

- Integrate HorizontalPodAutoscaler with custom metrics such as GPUUtilization.

- Operators can dynamically adjust the number of replicas for distributed training frameworks (e.g., Horovod).
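The scaling decision itself follows the ratio rule described in the Kubernetes HorizontalPodAutoscaler documentation: the desired replica count is the current count multiplied by the ratio of the observed metric to its target, rounded up. The sketch below reproduces that arithmetic in Python. The replica bounds and the 10% tolerance are illustrative assumptions, and a GPU utilization metric would still need to be exposed to the autoscaler through a custom metrics adapter.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float, target_metric: float,
                     min_replicas: int = 1, max_replicas: int = 8,
                     tolerance: float = 0.1) -> int:
    """Ratio-based scaling: ceil(current_replicas * current_metric / target_metric)."""
    ratio = current_metric / target_metric
    # Within the tolerance band, leave the replica count alone to avoid flapping.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    proposed = math.ceil(current_replicas * ratio)
    # Clamp to the configured bounds (assumed values, chosen for illustration).
    return max(min_replicas, min(max_replicas, proposed))
```

With four workers averaging 90% GPU utilization against a 60% target, the rule asks for six workers; at 30% utilization it asks for two.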

5.2 Job Queue Scaling

- Implement a queue manager CRD that keeps a backlog of pending training jobs.

- Use the Cluster Autoscaler to spin up nodes when the queue length exceeds a threshold.
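The threshold rule can be written down as a small function. Note that the Cluster Autoscaler reacts to pods that cannot be scheduled rather than to a queue length directly, so this sketch models what a hypothetical queue manager might decide before it releases jobs to the cluster; `nodes_to_add` and all of its parameters are assumptions made for illustration.

```python
import math


def nodes_to_add(queue_length: int, threshold: int, jobs_per_node: int,
                 current_nodes: int, max_nodes: int) -> int:
    """Return how many nodes to request for the backlog above the threshold."""
    if queue_length <= threshold:
        return 0  # the existing capacity is expected to drain the queue
    backlog = queue_length - threshold
    wanted = math.ceil(backlog / jobs_per_node)
    # Never exceed the node budget for the pool.
    headroom = max(0, max_nodes - current_nodes)
    return min(wanted, headroom)
```

For example, ten queued jobs with a threshold of four and two jobs per node would request three extra nodes, provided the pool has that much headroom.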

5.3 Multi‑Cluster Federation

- Distribute training across multiple cloud regions by replicating the Operator’s CRDs to federated clusters.

- Operators can detect regional latency and schedule jobs in the region closest to their data.

6. Reliability Patterns for ML Operators

Operators can enforce reliability guarantees through:

- Retry Logic: Automatic retries with exponential back‑off for transient failures.

- Dead‑Letter Queues: Persist failures in a dedicated queue for later inspection.

- Health Checks: Operators expose liveness and readiness probes that verify downstream dependencies (e.g., GPU driver, disk space).

- Observability Hooks: Emit logs to Loki, metrics to Prometheus, and traces to Jaeger for comprehensive monitoring.
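The retry pattern is straightforward to sketch. The version below uses exponential back‑off with a cap and random jitter; the default delays, the retry count, and the choice of which exceptions count as transient are assumptions to adapt, and `retry` is a hypothetical helper rather than part of any operator framework.

```python
import random
import time


def backoff_delays(max_retries: int, base: float = 1.0, cap: float = 60.0) -> list[float]:
    """Upper bounds for each wait: base, 2*base, 4*base, ... limited to cap."""
    return [min(cap, base * 2 ** attempt) for attempt in range(max_retries)]


def retry(operation, max_retries: int = 5, base: float = 1.0, cap: float = 60.0,
          sleep=time.sleep, transient=(ConnectionError, TimeoutError)):
    """Call operation() until it succeeds or the retries are exhausted."""
    delays = backoff_delays(max_retries, base, cap)
    for attempt in range(max_retries + 1):
        try:
            return operation()
        except transient:
            if attempt == max_retries:
                raise  # give up; the caller can route the failure to a dead-letter queue
            # Jitter spreads out retries from many jobs that failed at the same moment.
            sleep(random.uniform(0, delays[attempt]))
```

Non‑transient exceptions propagate immediately, which keeps genuine bugs from being hidden behind repeated attempts.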

7. Deployment Considerations

When adopting Operators for ML pipelines, teams should address:

7.1 Security

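- Use Pod Security Admission or OPA Gatekeeper to restrict container privileges (PodSecurityPolicy was removed in Kubernetes 1.25).

- Protect credentials by enabling encryption at rest for Kubernetes Secrets or by using an external vault (e.g., HashiCorp Vault).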

7.2 CI/CD Integration

- Treat operator CRDs as code and store them in a Git repository.

- Use ArgoCD or Flux for continuous delivery of operator updates.

7.3 Resource Management

- Define resource quotas for namespaces to prevent runaway training jobs.

- Employ LimitRanges to enforce minimum and maximum CPU/GPU limits.

8. Future Trends: AI‑Native Operators

With the rise of AI‑native infrastructure, Operators are evolving to incorporate AI capabilities directly into the control loop:

- Self‑Optimizing Operators that use reinforcement learning to adjust hyper‑parameters during training.

- Operators that auto‑detect data drift and trigger re‑training cycles without human intervention.

- Integration with serverless frameworks (Knative, OpenFaaS) to spin up inference nodes on demand.
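To make the drift‑triggered retraining idea concrete, here is a deliberately crude sketch: it measures how far the mean of a live feature has moved from the training data, in units of the training data's standard deviation. The function names and the threshold of 2.0 are assumptions, and production systems typically rely on stronger checks such as the Kolmogorov–Smirnov test or the Population Stability Index.

```python
from statistics import mean, pstdev


def drift_score(reference: list[float], current: list[float]) -> float:
    """Shift of the current mean from the reference mean, in reference standard deviations."""
    sigma = pstdev(reference)
    if sigma == 0:
        # A constant reference feature: any change at all counts as unbounded drift.
        return 0.0 if mean(current) == mean(reference) else float("inf")
    return abs(mean(current) - mean(reference)) / sigma


def should_retrain(reference: list[float], current: list[float], threshold: float = 2.0) -> bool:
    """Signal a retraining run when the drift score exceeds the threshold."""
    return drift_score(reference, current) > threshold
```

An Operator following this pattern would evaluate `should_retrain` on a schedule and, when it returns `True`, create a new training resource instead of waiting for a human to notice the degradation.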

These advancements will further blur the line between operations and data science, making Operators a cornerstone of future ML platforms.

Conclusion

By encapsulating ML lifecycle logic into declarative, Kubernetes‑native Operators, organizations can dramatically reduce toil, enforce reproducibility, and scale training workloads efficiently. Operators transform complex pipelines into manageable, versioned, and self‑healing systems that adapt to the cloud’s dynamic nature.

Start building your own Kubernetes Operator for ML today!