Modern microservice architectures demand continuous delivery cycles that deliver new features without disrupting users. The Zero‑Downtime Blue‑Green Deployment strategy paired with Parallel Regression Tests has emerged as the gold standard for 2026 release pipelines. By running tests in parallel against both the current (green) and new (blue) environments while asserting health‑check endpoints, teams can validate every deployment before traffic is switched. This article walks through the technical architecture, tooling, and best practices that make this approach reliable, scalable, and efficient.

Why Zero‑Downtime Matters in 2026

As SaaS offerings expand, even a few seconds of downtime or degraded latency can cascade into revenue loss and reputational damage. In 2026, real‑time analytics, AI‑driven services, and multi‑tenant architectures make availability not just an operational concern but a competitive differentiator. Zero‑Downtime Blue‑Green Deployments address this by:

- Preserving user experience: Traffic is routed only to a fully tested, healthy environment.

- Enabling instant rollback: If the new image fails health checks, traffic reverts to the green cluster with no user impact.

- Providing deterministic rollouts: Deployment progress is tracked through observable health metrics rather than blind confidence.

Parallel Regression Testing: The Backbone of Confidence

Regression tests historically ran sequentially, creating bottlenecks that forced teams to accept higher risk or longer pipelines. Parallel regression testing flips that paradigm, distributing test suites across a Kubernetes cluster or cloud‑native testing framework. Key benefits include:

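- Reduced test duration: As an illustrative figure, a suite that takes 45 minutes to run sequentially might finish in about 10 minutes on a 50‑node grid. The speedup is usually less than linear because of per‑shard setup time and uneven shard durations.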

- Higher coverage: Test cases can be split across shards, allowing more edge scenarios to run within the same window.

- Isolation: Failures in one shard don’t cascade, preserving pipeline stability.

Parallelism, however, introduces its own challenges—state management, test flakiness, and resource contention. The following section explains how to structure your regression suite for parallel execution.

Designing Sharded Test Suites

Effective sharding requires that tests be independent and deterministic. Use the following guidelines:

- Data isolation: Spin up a dedicated database instance or use schema‑level isolation for each test shard.

- Stateless services: Where possible, refactor tests to avoid shared state; utilize in‑memory mocks.

- Test ordering: Randomize test order to surface hidden dependencies early.
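Randomized ordering is only useful if a failing order can be replayed. The sketch below shuffles with a seed that the pipeline would log; the function name and the idea of passing the seed in from CI are assumptions for illustration, not part of any particular test runner.

```python
import random


def shuffled_order(test_ids: list[str], seed: int) -> list[str]:
    """Return a shuffled copy of test_ids that is reproducible for a given seed."""
    rng = random.Random(seed)  # private generator; global random state is untouched
    order = sorted(test_ids)   # normalise the input order so only the seed matters
    rng.shuffle(order)
    return order


if __name__ == "__main__":
    seed = 20260101  # hypothetical value; in CI this would be logged with the run
    print(seed, shuffled_order(["test_cart", "test_login", "test_search"], seed))
```

Re-running with the logged seed reproduces the same order, which makes an order-dependent failure debuggable instead of intermittent.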

Tools like TestContainers and Kind can spin up per‑shard containers, ensuring consistent environments across parallel runs.
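One way to split a suite across shards without any coordination between runners is to hash each test identifier. This is a minimal sketch under that assumption; `shard_for` and `select_tests` are hypothetical helper names, and a duration-aware splitter would balance shards better.

```python
import hashlib


def shard_for(test_id: str, total_shards: int) -> int:
    """Map a test identifier to a shard index in [0, total_shards)."""
    # Python's built-in hash() of a str is salted per process, so a
    # cryptographic digest is used to get the same answer on every runner.
    digest = hashlib.sha256(test_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % total_shards


def select_tests(test_ids: list[str], shard_index: int, total_shards: int) -> list[str]:
    """Return the subset of tests that this shard should execute."""
    return [t for t in test_ids if shard_for(t, total_shards) == shard_index]


if __name__ == "__main__":
    suite = [f"tests/test_checkout.py::test_case_{i}" for i in range(10)]
    for index in range(3):
        print(index, select_tests(suite, index, 3))
```

Each runner only needs its own index and the total shard count, and every test lands in exactly one shard.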

Health‑Check Assertions in CI

Health checks are the safety net that catches subtle regressions missed by functional tests. Typical health‑check endpoints include:

- Service liveness: /health/live confirms the process is running.

- Readiness: /health/ready verifies dependencies are up.

- Metrics endpoints: /metrics exposes Prometheus metrics for anomaly detection.

In a CI pipeline, these assertions must pass before the traffic switch: run them against the new environment as soon as it is deployed, and again after the parallel regression tests complete. Use a lightweight script that polls the endpoints, verifies expected response codes, and checks critical metric thresholds (e.g., response latency < 200 ms).
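A minimal sketch of such a polling script, using only the Python standard library. The retry count, delay, and the injectable `fetch` and `sleep` parameters are assumptions made so the logic can be exercised without a live service; metric thresholds such as latency are left out for brevity.

```python
import time
import urllib.error
import urllib.request
from typing import Callable, Sequence


def http_status(url: str, timeout: float = 2.0) -> int:
    """Return the HTTP status code for url, or 0 if the request could not complete."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.status
    except urllib.error.HTTPError as err:
        return err.code  # the server answered, but with an error status
    except (urllib.error.URLError, OSError):
        return 0         # connection refused, DNS failure, timeout, ...


def wait_until_healthy(
    base_url: str,
    paths: Sequence[str] = ("/health/live", "/health/ready"),
    attempts: int = 10,
    delay_s: float = 3.0,
    fetch: Callable[[str], int] = http_status,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Poll every path until all return 200, giving up after `attempts` rounds."""
    for attempt in range(attempts):
        if all(fetch(base_url + path) == 200 for path in paths):
            return True
        if attempt < attempts - 1:
            sleep(delay_s)  # give the environment time to become ready
    return False
```

A CI step could call `wait_until_healthy` with the blue environment's base URL and exit non-zero when it returns `False`, which stops the pipeline before any traffic moves.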

CI Pipeline Architecture for Zero‑Downtime Deployments

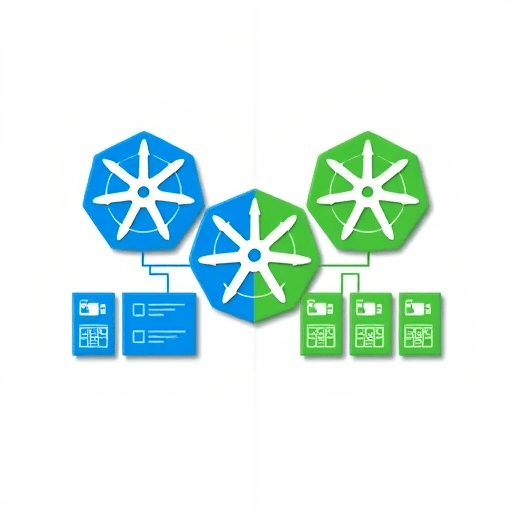

Below is a high‑level pipeline that integrates parallel regression tests and health‑check assertions. The architecture is intentionally modular to allow teams to plug in their preferred tools.

Stage 1: Build & Artifact Store

- Checkout code from Git.

- Build container image (Docker, OCI).

- Tag image with the git commit SHA and push to registry.

- Upload build artifacts (e.g., Helm charts, Terraform modules) to artifact store.
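The tagging step can be sketched as follows. The repository name in the usage line is a placeholder, and the helper names are hypothetical; the only external command used is `git rev-parse HEAD`.

```python
import re
import subprocess


def current_commit_sha() -> str:
    """Ask git for the full SHA of the checked-out commit."""
    result = subprocess.run(
        ["git", "rev-parse", "HEAD"], check=True, capture_output=True, text=True
    )
    return result.stdout.strip()


def image_ref(repository: str, sha: str) -> str:
    """Build an image reference whose tag is the commit SHA."""
    # Reject mutable tags such as "latest" so every image maps to one commit.
    if not re.fullmatch(r"[0-9a-f]{7,40}", sha):
        raise ValueError(f"not a git commit SHA: {sha!r}")
    return f"{repository}:{sha}"


if __name__ == "__main__":
    # "registry.example.com/shop/api" is a placeholder repository name.
    print(image_ref("registry.example.com/shop/api", current_commit_sha()))
```

Tagging by commit keeps the blue and green environments traceable to exact source revisions, which matters when comparing test results between them.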

Stage 2: Blue Cluster Deployment

- Spin up a blue Kubernetes namespace or cluster using the new image.

- Run health‑check assertions against the blue environment.

- If any assertion fails, abort the pipeline and notify the ops team.

Stage 3: Parallel Regression Tests

- Provision a dedicated test cluster or use cloud services (e.g., GitHub Actions matrix, CircleCI parallelism).

- Distribute the regression suite across shards.

- Execute tests against both blue and green services concurrently, capturing logs and artifacts.

- Aggregate test results; if failures occur, automatically mark the pipeline as failed and roll back the blue deployment.
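The aggregation step might look like the sketch below. It treats a missing shard report as a failure, since a crashed runner would otherwise look like a pass. The `ShardResult` shape is an assumption; real pipelines would usually parse JUnit XML or a similar report format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ShardResult:
    environment: str  # "blue" or "green"
    shard: int
    passed: int
    failed: int


def evaluate(
    results: list[ShardResult], environments: tuple[str, ...], total_shards: int
) -> list[str]:
    """Return a list of problems; an empty list means the gate passes."""
    problems = []
    reported = {(r.environment, r.shard) for r in results}
    # Every shard of every environment must have reported something.
    for env in environments:
        for shard in range(total_shards):
            if (env, shard) not in reported:
                problems.append(f"{env} shard {shard}: no result reported")
    # Any failed test blocks the traffic switch.
    for r in results:
        if r.failed > 0:
            problems.append(f"{r.environment} shard {r.shard}: {r.failed} failed test(s)")
    return problems
```

A non-empty return value is the signal to mark the pipeline as failed and tear down the blue deployment.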

Stage 4: Traffic Switch

- Once all health checks and regression tests pass, update the load balancer or DNS to route traffic to the blue cluster.

- Monitor traffic metrics for any anomalies.

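- If issues arise, route traffic back to green, which is still running the previous release. With a DNS‑based switch, the rollback takes as long as the record's TTL to reach all clients.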
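When both environments run in one Kubernetes cluster, a common way to switch traffic is to change the label selector of the Service in front of them. The sketch below builds such a patch and decides when to roll back. The `color` label key, the 1% error threshold, and the three-sample rule are all assumptions for illustration.

```python
import json
from typing import Iterable


def selector_patch(color: str) -> str:
    """JSON merge-patch body that points a Service at pods labelled color=<color>."""
    if color not in ("blue", "green"):
        raise ValueError(f"unknown environment: {color!r}")
    return json.dumps({"spec": {"selector": {"color": color}}})


def should_roll_back(
    error_rates: Iterable[float], threshold: float = 0.01, consecutive: int = 3
) -> bool:
    """True once `consecutive` samples in a row exceed `threshold`."""
    streak = 0
    for rate in error_rates:
        # A single healthy sample resets the streak, which filters out blips.
        streak = streak + 1 if rate > threshold else 0
        if streak >= consecutive:
            return True
    return False


if __name__ == "__main__":
    print(selector_patch("blue"))
    print(should_roll_back([0.001, 0.02, 0.03, 0.05]))
```

The patch body could be applied with `kubectl patch service <name> --type merge -p '<patch>'`; rolling back is the same call with `green`. Requiring several consecutive bad samples trades a slightly slower rollback for fewer false alarms.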

Tooling Choices for 2026

Choosing the right tools can dramatically simplify the deployment pipeline. Below is a curated list of open‑source and commercial solutions that align with the Zero‑Downtime Blue‑Green Deployment model.

Container Orchestration

- Kubernetes 1.28+ – Native support for multiple namespaces and rolling updates.

- OpenShift – Enterprise‑grade platform with built‑in blue‑green deployment support.

- Rancher – Simplifies multi‑cluster management and provides promotion pipelines.

CI/CD Platforms

- GitHub Actions – Native matrix support for parallel test execution.

- GitLab CI – Built‑in environment variable handling and parallel jobs.

- Argo CD – GitOps tool that automatically deploys Helm charts to target environments.

Test Automation

- TestContainers – Spin up dependency containers for isolated testing.

- Playwright – Modern end‑to‑end testing framework with built‑in parallel execution.

- Jest or Vitest – Fast JavaScript test runners that isolate test files and run them in parallel.

Monitoring & Observability

- Prometheus + Grafana – Aggregate metrics across blue and green clusters.

- Jaeger – Distributed tracing to spot latency regressions.

- ELK Stack – Centralized logging for test artifacts.

Common Pitfalls and How to Avoid Them

Even with a well‑architected pipeline, teams can encounter issues. Below are the most frequent mistakes and strategies to mitigate them.

1. Over‑Paralleling Without Resource Management

Running too many test shards concurrently can exhaust cluster resources, leading to flaky tests. Set ResourceQuota and LimitRange objects per namespace, and cap the number of concurrent shards, to prevent runaway consumption.
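A quick way to pick a safe shard count is to divide the namespace quota by what one shard requests. The figures below are hypothetical; substitute the values from your own quota and pod specs.

```python
def max_parallel_shards(
    cpu_quota_millicores: int,
    memory_quota_mib: int,
    cpu_per_shard_millicores: int,
    memory_per_shard_mib: int,
) -> int:
    """Largest shard count that fits inside both the CPU and the memory quota."""
    by_cpu = cpu_quota_millicores // cpu_per_shard_millicores
    by_memory = memory_quota_mib // memory_per_shard_mib
    return min(by_cpu, by_memory)  # the scarcer resource sets the limit


if __name__ == "__main__":
    # 16 CPUs and 32 GiB of quota; each shard requests 0.5 CPU and 2 GiB.
    print(max_parallel_shards(16000, 32768, 500, 2048))  # prints 16
```

In this example memory is the bottleneck: CPU alone would allow 32 shards, but memory allows only 16.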

2. Skipping Data Seeding

Regression tests that assume pre‑existing data will fail when deployed to a fresh environment. Incorporate automated data seeding scripts as part of the blue deployment.
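Seeding scripts are easier to operate when they are idempotent, so a retried deployment does not duplicate rows. The sketch below uses SQLite from the standard library as a stand-in for the real database; the `products` table and its columns are hypothetical.

```python
import sqlite3


def seed_products(conn: sqlite3.Connection, products: list[tuple[str, str]]) -> int:
    """Insert baseline rows if absent and return the resulting row count."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS products (sku TEXT PRIMARY KEY, name TEXT NOT NULL)"
    )
    # INSERT OR IGNORE skips rows whose primary key already exists,
    # so running the seed twice leaves the table unchanged.
    conn.executemany("INSERT OR IGNORE INTO products (sku, name) VALUES (?, ?)", products)
    conn.commit()
    return conn.execute("SELECT COUNT(*) FROM products").fetchone()[0]


if __name__ == "__main__":
    connection = sqlite3.connect(":memory:")
    baseline = [("SKU-1", "Test mug"), ("SKU-2", "Test shirt")]
    print(seed_products(connection, baseline))
    print(seed_products(connection, baseline))  # same count on the second run
```

Other databases offer equivalent constructs, such as `ON CONFLICT DO NOTHING` in PostgreSQL.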

3. Ignoring Chaos Engineering

Health checks may pass under normal conditions but fail during failure scenarios. Integrate chaos tests (e.g., Gremlin, Chaos Mesh) into the regression suite to validate resilience.

4. Not Cleaning Up Orphaned Resources

After a failed blue deployment, stale namespaces can accumulate. Implement automated cleanup jobs that delete orphaned resources after a certain TTL.
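The selection logic for such a cleanup job can be sketched independently of any cluster API. Here the namespace names, their creation times, and the set of namespaces currently serving traffic are assumed to have been fetched elsewhere.

```python
from datetime import datetime, timedelta, timezone


def expired_namespaces(
    created_at: dict[str, datetime],
    live: set[str],
    ttl: timedelta,
    now: datetime,
) -> list[str]:
    """Names of namespaces older than ttl that are not serving traffic."""
    return sorted(
        name
        for name, created in created_at.items()
        if name not in live and now - created > ttl
    )


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    namespaces = {
        "blue-4f2a": now - timedelta(hours=30),  # failed deploy, past its TTL
        "blue-9c1e": now - timedelta(hours=2),   # recent, still within TTL
        "green-live": now - timedelta(days=5),   # old, but serving traffic
    }
    print(expired_namespaces(namespaces, {"green-live"}, timedelta(hours=24), now))
```

Excluding live namespaces explicitly is the important guard: age alone would eventually mark the production environment for deletion.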

5. Relying Solely on Syntactic Health Checks

Health endpoints that only verify HTTP 200 responses can miss deeper functional regressions. Combine syntactic checks with semantic assertions that validate payload structure and business logic.
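A semantic assertion inspects the response body rather than just the status code. The payload shape assumed below, with a `status` field and a `dependencies` map, is hypothetical; adapt the checks to whatever your health endpoint actually returns.

```python
import json


def check_health_payload(body: str) -> list[str]:
    """Return the problems found in a health response body; empty means healthy."""
    try:
        payload = json.loads(body)
    except json.JSONDecodeError:
        return ["body is not valid JSON"]
    if not isinstance(payload, dict):
        return ["body is not a JSON object"]

    problems = []
    if payload.get("status") != "ok":
        problems.append(f"status is {payload.get('status')!r}, expected 'ok'")

    dependencies = payload.get("dependencies")
    if not isinstance(dependencies, dict) or not dependencies:
        problems.append("dependencies section is missing or empty")
    else:
        # Report every dependency that is not up, not just the first one.
        for name, state in sorted(dependencies.items()):
            if state != "up":
                problems.append(f"dependency {name} is {state!r}")
    return problems
```

For example, a body of `{"status": "ok", "dependencies": {"db": "down"}}` would return HTTP 200 from many frameworks yet fails this check with one reported problem.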

Case Study: E‑Commerce Platform in 2026

Take ShopSphere, a global e‑commerce platform serving 10 million users daily. They adopted Zero‑Downtime Blue‑Green Deployments with Parallel Regression Tests in 2025. The key outcomes included:

- Reduced release cycle time from 48 hours to 12 hours.

- Zero customer‑reported incidents during rollouts.

- 90% decrease in mean time to recovery (MTTR) due to instant rollback capability.

ShopSphere’s pipeline leverages GitHub Actions for CI, Argo CD for CD, and TestContainers for data isolation. Their health checks include an /api/healthz endpoint that verifies inventory availability, payment gateway connectivity, and recommendation engine response times.

Future Trends: AI‑Assisted Deployment Validation

Looking ahead, AI models are beginning to predict deployment outcomes based on historical data. In 2026, teams can use machine learning to assign a risk score to each new commit and adjust the depth of parallel testing or the pace of traffic shifting accordingly. This approach reduces the manual review each release needs and shortens the path to a safe deployment.

Conclusion

Zero‑Downtime Blue‑Green Deployments powered by Parallel Regression Tests and rigorous health‑check assertions provide a robust framework for delivering new features without user impact. By carefully designing sharded test suites, selecting the right tooling, and anticipating common pitfalls, organizations can achieve predictable, low‑risk rollouts that scale with growth. Embracing these practices positions teams to meet the demanding expectations of 2026’s continuous delivery landscape.