Why Correlating Metrics, Logs, and Traces Saves Time

In a distributed, cloud‑native environment, incidents can surface as subtle metric spikes, obscure log entries, or fragmented traces. The traditional siloed approach forces engineers to jump between dashboards, hunting for clues that may never align. By weaving metrics, logs, and traces into a single narrative, teams can slice through noise, pinpoint anomalies, and eliminate guesswork. In 2026, the average incident response time in production has already dropped by 30% for organizations that adopt this unified observability strategy.

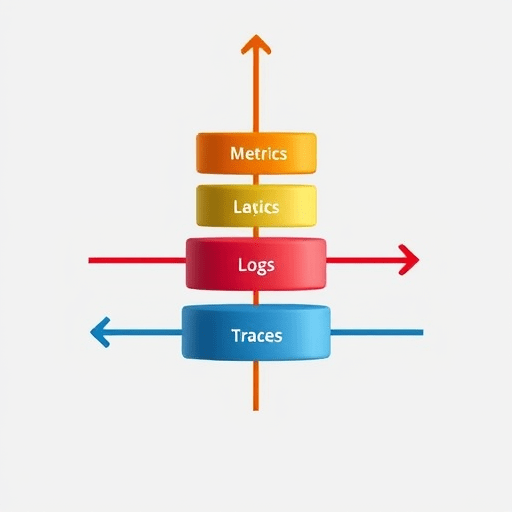

The Architecture of a Unified Observability Stack

A modern stack is built on three pillars: instrumentation, data collection, and analysis. Each pillar feeds into the next, creating a continuous feedback loop that keeps teams in sync with the system’s health.

- Instrumentation – Code changes and libraries that emit telemetry.

- Data Collection – Ingesters, collectors, and agents that transport telemetry to storage.

- Analysis – Query engines, correlation engines, and visualization tools that turn raw data into actionable insights.

Choosing the right tools for each pillar determines how quickly you can correlate signals. Common choices in 2026 include OpenTelemetry for instrumentation, Prometheus or VictoriaMetrics for metrics, Grafana Loki for logs, and Tempo or Jaeger for tracing. The key is that all of these can interoperate through shared context identifiers.

Step 1: Instrument Your Services with Context Propagation

Before any data can be correlated, services must agree on a shared context: typically a trace_id and a span_id. OpenTelemetry’s context propagation libraries automatically inject these IDs into HTTP headers, gRPC metadata, and message-queue headers. Each downstream service then inherits the same trace_id and creates its own span_id, so its logs and metrics can be traced back to the originating request.

Step 2: Collect and Centralize Metrics

Metrics should be emitted at the finest granularity that your infrastructure can tolerate. In 2026, a best practice is to publish metrics to a time-series database that supports high write throughput and low-latency queries. Use histograms for latency distributions and counters for error rates. Do not turn the context identifiers from Step 1 into metric labels: every distinct trace_id would create a new time series, so cardinality would grow with request volume. Attach them as exemplars instead, which link a sample to one representative trace without adding a series.

Example in OpenMetrics exposition format, with the trace ID carried as an exemplar (the exemplar value 0.08 is illustrative):

    # HELP http_request_duration_seconds HTTP request latency
    # TYPE http_request_duration_seconds histogram
    http_request_duration_seconds_bucket{le="0.1"} 3 # {trace_id="abc123"} 0.08
    http_request_duration_seconds_bucket{le="0.2"} 5
    ...

Step 3: Stream Logs with Contextual Metadata

Logging libraries should enrich each log entry with the same trace_id and span_id. Structured logs in JSON format make this trivial. Once emitted, logs are forwarded to a log ingestion pipeline such as Grafana Loki. Because Loki indexes only labels, keep the labels low-cardinality (service, environment) and leave trace_id in the log line itself. LogQL’s json parser can then filter on it at query time, so the log store lines up with your metrics and traces.
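
As an illustration of log enrichment, the sketch below uses only Python's standard `logging`, `json`, and `contextvars` modules. The context variables `current_trace_id` and `current_span_id` and the logger name `checkout` are assumptions made for the example; with OpenTelemetry in place, the SDK would supply the active IDs instead.

```python
import contextvars
import json
import logging

# Hypothetical context variables; in practice a tracing SDK would own these.
current_trace_id = contextvars.ContextVar("trace_id", default="")
current_span_id = contextvars.ContextVar("span_id", default="")


class JsonTraceFormatter(logging.Formatter):
    """Render each record as one JSON object that carries the trace context."""

    def format(self, record: logging.LogRecord) -> str:
        entry = {
            "ts": self.formatTime(record),
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
            "trace_id": current_trace_id.get(),
            "span_id": current_span_id.get(),
        }
        return json.dumps(entry)


def build_logger(name: str = "checkout") -> logging.Logger:
    """Return a logger that writes JSON lines to stderr."""
    logger = logging.getLogger(name)
    handler = logging.StreamHandler()
    handler.setFormatter(JsonTraceFormatter())
    logger.handlers = [handler]
    logger.setLevel(logging.INFO)
    logger.propagate = False
    return logger
```

A request handler would set the two context variables once per request, after which every `logger.info(...)` or `logger.error(...)` call in that request carries the same identifiers.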

Step 4: Trace Request Paths Across Microservices

Distributed tracing captures the entire journey of a request across services. By using a sampling strategy that balances fidelity and cost—often 1–5% of traffic for production—you can obtain enough traces to surface patterns without overwhelming storage. Store traces in a backend that supports span grouping and allows queries like “show all spans for trace_id abc123.”
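
One way to reason about the sampling rate is a deterministic, trace-ID-based decision, sketched below. It is similar in spirit to ratio-based head samplers but is not a copy of any particular SDK's implementation; `should_sample` is a hypothetical name, and the sketch assumes trace IDs are random 32-character hex strings.

```python
def should_sample(trace_id: str, ratio: float) -> bool:
    """Deterministically keep roughly `ratio` of traces, keyed on the trace ID."""
    if not 0.0 <= ratio <= 1.0:
        raise ValueError("ratio must be between 0 and 1")
    # Treat the low 64 bits of the hex trace ID as a uniformly distributed number.
    low_bits = int(trace_id[-16:], 16)
    return low_bits < int(ratio * 2**64)
```

Because the decision depends only on the trace ID, every service that sees the same request reaches the same verdict, so a sampled trace is kept in full rather than in fragments.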

Step 5: Build Correlation Rules

Correlation is the engine that turns isolated signals into a unified story. Start with simple rules:

- Rule 1: If a metric spike (> 3σ) occurs, look for logs with the same trace_id within a ±5 s window.

- Rule 2: If a log contains an error pattern, check the corresponding trace to identify which service introduced the error.

- Rule 3: If a trace contains a high latency span, examine the metrics of that service for CPU or memory saturation.

In 2026, many observability platforms expose a declarative rule engine where you can write these conditions in YAML or JSON. The engine then automatically surfaces incidents in a unified incident board.
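
Rule 1 can be sketched in a few lines of plain Python. This is an illustration under stated assumptions, not how any particular rule engine works: the `MetricPoint` and `LogEntry` types are hypothetical, the spike test uses a trailing baseline of 20 points, and each metric point is assumed to carry a representative trace_id (for instance from an exemplar).

```python
from dataclasses import dataclass
from statistics import mean, stdev


@dataclass
class MetricPoint:
    ts: float       # Unix seconds
    value: float
    trace_id: str   # representative trace for this sample


@dataclass
class LogEntry:
    ts: float       # Unix seconds
    trace_id: str
    message: str


def find_spikes(points: list[MetricPoint], baseline: int = 20,
                sigmas: float = 3.0) -> list[MetricPoint]:
    """Flag points more than `sigmas` standard deviations above the trailing baseline."""
    spikes = []
    for i in range(baseline, len(points)):
        window = [p.value for p in points[i - baseline:i]]
        mu, sd = mean(window), stdev(window)
        # A perfectly flat baseline (sd == 0) is skipped to avoid false positives.
        if sd > 0 and points[i].value > mu + sigmas * sd:
            spikes.append(points[i])
    return spikes


def logs_for_spike(spike: MetricPoint, logs: list[LogEntry],
                   window_s: float = 5.0) -> list[LogEntry]:
    """Rule 1: logs sharing the spike's trace_id within +/- window_s seconds."""
    return [
        entry for entry in logs
        if entry.trace_id == spike.trace_id and abs(entry.ts - spike.ts) <= window_s
    ]
```

Rules 2 and 3 follow the same shape: start from one signal, take its trace_id or service name, and look up the other two signals in a bounded time window.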

Step 6: Visualize and Alert on Correlated Events

Create dashboards that overlay metrics, logs, and traces for a single request. Grafana’s correlation features, such as exemplars on metric panels and trace-to-logs links, let you click on a metric point, jump to the related trace, and view its logs without rebuilding the query by hand. Alerts should fire on correlated conditions, not isolated signals: for example, an alert that fires only when both a CPU spike and an error log appear for the same trace.
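
The idea of alerting only on correlated conditions can be sketched as a simple join. The `Signal` type, the signal names `cpu_spike` and `error_log`, and the 60-second window are assumptions for illustration; the correlation key could be a trace_id or a service name, depending on what both signals carry.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Signal:
    kind: str    # "cpu_spike" or "error_log" (hypothetical signal names)
    key: str     # correlation key, e.g. a trace_id or a service name
    ts: float    # Unix seconds


def correlated_alerts(signals: list[Signal],
                      window_s: float = 60.0) -> list[tuple[Signal, Signal]]:
    """Pair each CPU spike with error logs that share its key within window_s."""
    spikes = [s for s in signals if s.kind == "cpu_spike"]
    errors = [s for s in signals if s.kind == "error_log"]
    return [
        (spike, err)
        for spike in spikes
        for err in errors
        if spike.key == err.key and abs(spike.ts - err.ts) <= window_s
    ]
```

An empty result means no alert: a CPU spike without a matching error, or an error without a matching spike, stays on the dashboard instead of paging anyone.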

Step 7: Run Root Cause Drills

Once the stack is in place, practice the entire flow. Simulate a production incident by injecting latency or errors. Verify that the metrics spike, logs appear with the correct context, and traces capture the anomaly. Measure the time from alert to root cause discovery. In teams that have embraced this methodology, the time has dropped from 2–3 hours to under 30 minutes.
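
For a drill in a test or staging environment, latency and errors can be injected with a small wrapper. The decorator below is a hypothetical sketch using only the standard library (`inject_faults` and `InjectedFault` are made-up names); dedicated chaos-engineering tools offer far more control.

```python
import random
import time
from functools import wraps


class InjectedFault(RuntimeError):
    """Raised by the drill wrapper to simulate a failing dependency."""


def inject_faults(error_rate: float = 0.0, latency_s: float = 0.0, rng=None):
    """Wrap a function so calls are delayed and fail with the given probability."""
    rng = rng or random.Random()

    def decorator(func):
        @wraps(func)
        def wrapper(*args, **kwargs):
            if latency_s > 0:
                time.sleep(latency_s)  # simulated slowdown
            if rng.random() < error_rate:
                raise InjectedFault(f"drill: injected failure in {func.__name__}")
            return func(*args, **kwargs)
        return wrapper

    return decorator
```

Wrapping one downstream call with, say, `@inject_faults(error_rate=0.2, latency_s=0.5)` during a drill should produce a visible latency shift, error logs with the right trace context, and slow spans in the affected traces.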

Step 8: Continuous Improvement with Observability Insights

Observability is not a set‑and‑forget solution. Use the data you’ve collected to identify systemic weaknesses: a frequently traced endpoint with high latency, or a microservice that consistently emits error logs. Prioritize fixes that reduce the correlation load and improve overall system resilience. Periodically review your instrumentation: add missing context fields or refine sampling rates to match traffic patterns.

Conclusion

By harmonizing metrics, logs, and traces, teams transform chaotic incident response into a streamlined, data‑driven process. The key lies in consistent context propagation, robust ingestion pipelines, and intelligent correlation rules that surface root causes faster than ever. As 2026’s distributed systems grow in complexity, this unified observability approach will be the cornerstone of resilient, high‑performance production environments.