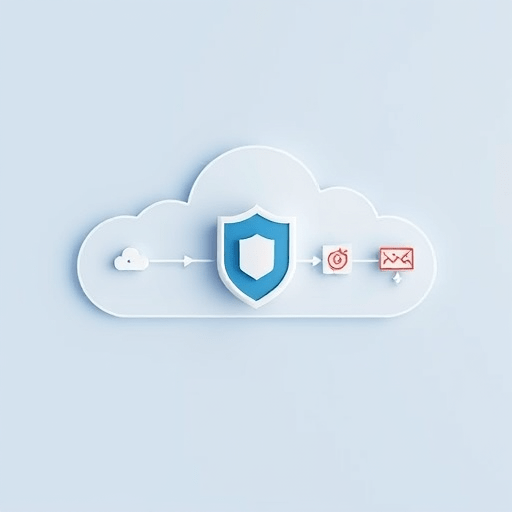

As cloud analytics become the backbone of AI development, safeguarding sensitive data while extracting value is a paramount challenge. The emerging practice of embedding differential privacy into cloud analytics pipelines offers a principled, auditable solution that balances privacy guarantees with analytic utility. This article walks through the core concepts, design patterns, and practical steps that 2026 enterprises can adopt to protect training data without compromising the intelligence of their models.

Why Differential Privacy Matters for Cloud AI Workflows

Modern AI pipelines ingest data from many sources (consumer logs, sensor feeds, medical records), often aggregated in the cloud for scalable processing. In this environment, privacy breaches can occur at several stages: raw ingestion, intermediate transformations, or model deployment. Regulations such as GDPR, CCPA, and the EU AI Act impose strict obligations on organizations that process personal data. Differential privacy (DP) provides a mathematically rigorous framework that bounds how much any single individual’s data can influence an output, giving compliance teams a quantifiable privacy metric to report against.
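
For reference, the standard formal statement of that bound is sketched here. The symbols $M$ (a randomized mechanism), $D$ and $D'$ (two datasets that differ in one individual’s record), and $S$ (any set of possible outputs) are notation introduced for this illustration. $M$ satisfies $(\varepsilon, \delta)$-differential privacy if, for all such $D$, $D'$, and $S$:

$$
\Pr[M(D) \in S] \;\le\; e^{\varepsilon} \, \Pr[M(D') \in S] + \delta
$$

Smaller values of $\varepsilon$ and $\delta$ mean the output distribution changes less when one person’s record changes, which is a stronger privacy guarantee.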

Fundamental DP Concepts for Cloud Pipelines

Privacy Budget (ε, δ)

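DP introduces a privacy budget, expressed through the parameters ε (epsilon) and δ (delta), that limits the total privacy loss across all queries or transformations. ε bounds how much the probability of any output can change when one individual’s record is added or removed, and δ is a small probability with which that bound is allowed to fail. In cloud pipelines, each statistical operation (e.g., histogram, count, mean) consumes a portion of this budget. The cumulative budget must stay within organizational thresholds to maintain acceptable privacy guarantees.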
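
A minimal sketch of how a pipeline might track this budget is shown below. It assumes basic sequential composition, where the ε and δ of successive operations simply add up; the class and method names (`PrivacyBudget`, `charge`, `BudgetExceededError`) are hypothetical and not taken from any particular cloud service.

```python
from dataclasses import dataclass, field


class BudgetExceededError(RuntimeError):
    """Raised when an operation would push cumulative privacy loss past the cap."""


@dataclass
class PrivacyBudget:
    """Tracks cumulative (epsilon, delta) under basic sequential composition."""

    epsilon_cap: float
    delta_cap: float
    epsilon_spent: float = 0.0
    delta_spent: float = 0.0
    history: list = field(default_factory=list)

    def charge(self, label: str, epsilon: float, delta: float = 0.0) -> None:
        # Basic composition: the epsilons and deltas of successive queries add.
        new_epsilon = self.epsilon_spent + epsilon
        new_delta = self.delta_spent + delta
        if new_epsilon > self.epsilon_cap or new_delta > self.delta_cap:
            raise BudgetExceededError(f"{label!r} would exceed the privacy budget")
        self.epsilon_spent, self.delta_spent = new_epsilon, new_delta
        self.history.append((label, epsilon, delta))

    @property
    def epsilon_remaining(self) -> float:
        return self.epsilon_cap - self.epsilon_spent
```

A pipeline stage would call `charge("histogram", 0.5)` before releasing a result and refuse to run the query if the call raises. Tighter accounting methods can replace the simple addition without changing this interface.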

Noise Injection Techniques

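Adding calibrated noise is the core of DP. The Laplace and Gaussian mechanisms add noise to numeric outputs, scaled to the query’s sensitivity and the chosen ε (and δ, for Gaussian). The exponential mechanism covers non-numeric outputs: rather than adding noise, it randomly selects a result with probability weighted by a utility score. Selecting the appropriate mechanism depends on the query type and the desired utility trade-off. Cloud-native services increasingly expose noise generation as a first-class function, reducing the implementation burden.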
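
As an illustration, the Laplace mechanism for a counting query can be sketched as follows. The function name `laplace_count` and its parameters are hypothetical. The sketch relies on the fact that adding or removing one record changes a count by at most 1, so the noise scale is 1/ε.

```python
import numpy as np


def laplace_count(values, predicate, epsilon, rng=None):
    """Return a noisy count of the items in `values` that satisfy `predicate`.

    A counting query has L1 sensitivity 1, so Laplace noise with
    scale 1 / epsilon gives epsilon-DP for this single release.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    if rng is None:
        rng = np.random.default_rng()
    true_count = sum(1 for v in values if predicate(v))
    noise = rng.laplace(loc=0.0, scale=1.0 / epsilon)
    return true_count + noise
```

This is a teaching sketch only. Naive floating-point noise sampling has known weaknesses, so production pipelines would normally rely on a vetted DP library rather than hand-rolled sampling.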

Composition Theorems

When multiple DP operations are chained, as is common in iterative training loops, composition theorems give the aggregate privacy loss. Basic composition simply adds the ε and δ values of each step. Advanced composition and the moments accountant provide tighter bounds, enabling deeper pipelines without exhausting the privacy budget.
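
The difference between the bounds can be made concrete with a short calculation. The sketch below compares basic composition with the advanced composition theorem for `k` runs of the same (ε, δ)-DP mechanism; the function names and the `delta_slack` parameter (the extra failure probability that advanced composition trades for a smaller ε) are naming choices made for this illustration.

```python
import math


def basic_composition(epsilon, delta, k):
    """Total (epsilon, delta) after k mechanisms under basic composition."""
    return k * epsilon, k * delta


def advanced_composition(epsilon, delta, k, delta_slack):
    """Total (epsilon, delta) after k mechanisms under advanced composition.

    epsilon_total = sqrt(2 k ln(1 / delta_slack)) * epsilon
                    + k * epsilon * (exp(epsilon) - 1)
    delta_total   = k * delta + delta_slack
    """
    if not 0 < delta_slack < 1:
        raise ValueError("delta_slack must be in (0, 1)")
    epsilon_total = (
        math.sqrt(2 * k * math.log(1 / delta_slack)) * epsilon
        + k * epsilon * (math.exp(epsilon) - 1)
    )
    return epsilon_total, k * delta + delta_slack
```

For 100 steps at ε = 0.1 with `delta_slack = 1e-5`, basic composition gives a total ε of 10, while the advanced bound comes out at roughly 5.85. For a small number of steps the basic bound can be the smaller one, so an accountant can reasonably take the minimum of the two.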

Designing a DP-Enabled Cloud Analytics Pipeline

1. Data Ingestion Layer

- Schema Validation & Anonymization: Apply DP-aware validation to strip or obfuscate sensitive fields before storage.

- Secure Transfer: Use encryption in transit (TLS 1.3) and authenticated APIs to prevent interception.
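
A minimal sketch of what validation at ingestion could look like follows. It drops direct identifiers and clamps numeric fields to declared ranges, so that downstream DP aggregations can rely on known bounds. The field names and ranges in `SENSITIVE_FIELDS` and `NUMERIC_BOUNDS` are hypothetical examples, and stripping fields this way is a preprocessing step, not a DP guarantee in itself.

```python
# Hypothetical schema: direct identifiers to drop before storage.
SENSITIVE_FIELDS = {"name", "email", "ssn"}

# Hypothetical declared ranges; clamping here lets later DP queries
# assume these bounds when computing sensitivity.
NUMERIC_BOUNDS = {"age": (0, 120), "heart_rate": (20, 250)}


def sanitize_record(record):
    """Return a copy of `record` without identifiers and with clamped numerics."""
    clean = {}
    for key, value in record.items():
        if key in SENSITIVE_FIELDS:
            continue  # never persist direct identifiers
        if key in NUMERIC_BOUNDS:
            low, high = NUMERIC_BOUNDS[key]
            value = min(max(float(value), low), high)
        clean[key] = value
    return clean
```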

2. Transformation & Feature Engineering

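- DP-Ready Transformations: Replace direct aggregations with DP equivalents. For example, use a DP mean estimator that clamps each value to a known range and adds Gaussian noise scaled to the query’s sensitivity, that is, the most one individual’s record can change the result.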

- Automated Sensitivity Analysis: Where the data schema declares value ranges, cloud services can derive sensitivity bounds from it automatically, reducing manual errors.
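
The DP mean estimator mentioned above can be sketched as follows. This version makes several assumptions that should be checked before any real use: the dataset size is treated as public, neighboring datasets differ by replacing one record (so the sensitivity of the mean is `(upper - lower) / n`), and the noise is calibrated with the classic Gaussian mechanism formula, which holds for ε below 1. The function name is hypothetical.

```python
import math

import numpy as np


def dp_mean_gaussian(values, lower, upper, epsilon, delta, rng=None):
    """Return an (epsilon, delta)-DP estimate of the mean of `values`."""
    if not 0 < epsilon < 1:
        raise ValueError("this calibration assumes 0 < epsilon < 1")
    if not 0 < delta < 1:
        raise ValueError("delta must be in (0, 1)")
    if rng is None:
        rng = np.random.default_rng()
    # Clamp to the declared range so one record has bounded influence.
    x = np.clip(np.asarray(values, dtype=float), lower, upper)
    if x.size == 0:
        raise ValueError("values must not be empty")
    # Replacing one record moves the mean by at most (upper - lower) / n.
    sensitivity = (upper - lower) / x.size
    sigma = sensitivity * math.sqrt(2 * math.log(1.25 / delta)) / epsilon
    return float(x.mean() + rng.normal(0.0, sigma))
```

Because the sensitivity shrinks with the number of records, the same ε adds far less relative noise to a mean over ten thousand rows than to one over a few dozen.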

3. Model Training & Evaluation

- DP-SGD (Stochastic Gradient Descent): Implement DP-SGD to clip each example’s gradient to a fixed norm and add Gaussian noise to the summed gradients of every minibatch, so that each update consumes a bounded, known share of the privacy budget.

- Privacy Accounting: Use a privacy accountant, such as the moments accountant, to track cumulative ε after each epoch.
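
The core DP-SGD update can be sketched in NumPy for a least-squares linear model. The function name and parameters are hypothetical, and the sketch covers only the clip-and-noise step. It does not compute the resulting ε, which requires a privacy accountant such as those shipped with established DP training libraries.

```python
import numpy as np


def dp_sgd_step(weights, X, y, lr, clip_norm, noise_multiplier, rng):
    """One DP-SGD step for the loss 0.5 * (x . w - y)^2 on a minibatch."""
    residuals = X @ weights - y                    # shape (batch,)
    per_example_grads = residuals[:, None] * X     # shape (batch, dim)

    # Clip each example's gradient to L2 norm at most clip_norm.
    norms = np.linalg.norm(per_example_grads, axis=1)
    scale = np.minimum(1.0, clip_norm / np.maximum(norms, 1e-12))
    clipped = per_example_grads * scale[:, None]

    # Add Gaussian noise to the summed gradients, then average.
    noise = rng.normal(0.0, noise_multiplier * clip_norm, size=weights.shape)
    noisy_mean_grad = (clipped.sum(axis=0) + noise) / len(X)
    return weights - lr * noisy_mean_grad
```

Clipping is what bounds each record’s influence on the update, and the noise standard deviation is tied to that same clipping norm. A larger `noise_multiplier` buys a smaller ε per step at the cost of noisier training.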

4. Model Serving & Monitoring

- Inference Privacy: If the model’s responses can reveal personal data, perturb them with calibrated noise before returning them.

- Audit Logging: Store tamper-evident records of the DP parameters used for each release (e.g., ε and δ values, mechanism, sensitivity bound) for regulatory audits.
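
One simple way to make such audit records tamper-evident is a hash chain, sketched below with the standard library. The record fields and function names are hypothetical. A hash chain shows that logged values were not altered after the fact; it does not by itself prove that the noise was applied correctly.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash for the first entry


def _digest(prev_hash, record):
    payload = json.dumps(record, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log, record):
    """Append `record` to `log`, linking it to the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    log.append({
        "record": record,
        "prev_hash": prev_hash,
        "hash": _digest(prev_hash, record),
    })
    return log


def verify_chain(log):
    """Return True if no entry has been modified, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True
```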

5. Continuous Privacy Management

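- Budget Replenishment: Automate periodic reassessment of privacy budgets based on data lifecycle events, such as the arrival of new records or the deletion of old ones. Budget already spent on a given record cannot be recovered, so replenishment applies only to data that has not yet been queried.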

- Alerting: Trigger alerts when projected privacy loss exceeds thresholds.
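
A threshold check of this kind might be sketched as follows. The linear projection (future queries are assumed to cost the same on average as past ones), the function names, and the `warn_fraction` default are all assumptions made for illustration.

```python
def projected_epsilon(spent, queries_done, queries_planned):
    """Linearly extrapolate total epsilon from the average cost so far."""
    if queries_done <= 0:
        return spent
    return spent / queries_done * queries_planned


def budget_status(spent, queries_done, queries_planned, cap, warn_fraction=0.8):
    """Classify the budget as 'ok', 'warn', or 'alert'."""
    projected = projected_epsilon(spent, queries_done, queries_planned)
    if spent > cap or projected > cap:
        return "alert"
    if projected > warn_fraction * cap:
        return "warn"
    return "ok"
```

A scheduler could evaluate `budget_status` after every release and pause non-essential queries once it returns `"warn"`.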

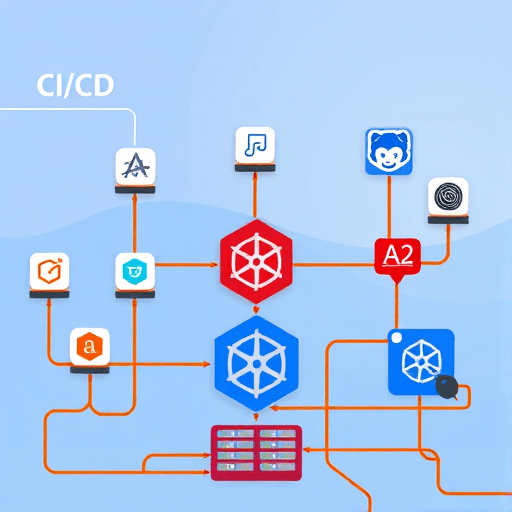

Integrating these layers requires orchestration tools—such as Kubernetes operators or serverless workflows—that can embed DP logic as middleware components. By treating DP as a pipeline attribute rather than a one-off guardrail, organizations achieve consistent privacy enforcement across all stages.

Case Study: Healthcare Data Analytics in 2026

A regional health system deployed an AI-driven diagnostic tool powered by patient records stored in a cloud data lake. The pipeline integrated DP at every transformation step: demographics were obfuscated with a Laplace mechanism, lab results were aggregated using DP-mean estimators, and the training loop employed DP-SGD with a target ε of 0.5 per epoch. Because privacy loss composes across epochs, the cumulative ε for the trained model depends on how many epochs were run. The result was a model that achieved 92% diagnostic accuracy while placing a formal bound on how much could be inferred about any individual from the model or intermediate outputs.

Moreover, the system maintained a privacy budget ledger that automatically adjusted for new data ingestion, supporting ongoing compliance with both GDPR and the EU AI Act. The cloud platform’s native DP library allowed developers to write declarative privacy policies in YAML, reducing code complexity and audit effort.

Key Considerations for 2026 Enterprises

- Data Quality vs. Privacy Trade-off: A smaller ε (stricter privacy) means more noise; balancing the two is essential to preserve model performance.

- Regulatory Landscape: Anticipate evolving standards (e.g., AI Act’s “high-risk” classification) that may mandate DP as a default.

- Talent & Tooling: Invest in data scientists trained in DP and in cloud services that offer built-in DP primitives.

- Cross-Organizational Data Sharing: Federated learning combined with DP can enable multi-hospital collaborations without exposing raw data.

Future Trends: 2026 and Beyond

- Zero-Trust Data Pipelines: Integration of DP with zero-trust architectures will make it possible to enforce privacy even when data traverses untrusted networks.

- Hardware-Accelerated DP: Dedicated DP accelerators in GPUs and TPUs will reduce the performance overhead of noise injection.

- Composable Privacy Policies: Declarative policy languages will allow organizations to specify privacy constraints that automatically translate to DP operations across heterogeneous cloud services.

- Audit-Ready Proofs: Advances in zero-knowledge proofs will enable auditors to verify DP compliance without revealing the underlying data.

Conclusion

Embedding differential privacy into cloud analytics pipelines is no longer an optional enhancement; it has become a foundational requirement for AI projects that rely on sensitive data. By treating DP as a first-class citizen in pipeline design—through thoughtful budgeting, noise injection, and continuous monitoring—organizations can deliver powerful insights while meeting regulatory obligations and preserving user trust. The 2026 landscape offers mature tooling, declarative policy frameworks, and hardware acceleration that collectively make the transition to privacy-preserving analytics both practical and sustainable.