When launching an AI-powered diagnostic mobile app in the European Economic Area, developers face two parallel streams of compliance: the European Medicines Agency’s (EMA) regulatory framework for Software as a Medical Device (SaMD) and the risk-management requirements of an ISO 13485 quality management system, which are normally met by applying ISO 14971. The EMA checklist for AI diagnostic apps merges these streams into a single, actionable roadmap that supports both regulatory approval and long-term product safety. This guide walks through each EMA submission step and maps it to the corresponding ISO 13485 risk-management activities, so you can build a cohesive dossier and reduce time-to-market.

1. Understand EMA’s Digital Health Priority List and Market Access

1.1 Regulatory Pathways for Software as a Medical Device (SaMD)

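SaMD is classified according to the risk of its intended medical use, following Rule 11 of Annex VIII of the EU Medical Device Regulation (MDR, Regulation (EU) 2017/745). Software that provides information used to take diagnostic or therapeutic decisions, which covers most AI diagnostic apps, is Class IIa by default. It moves to Class IIb if those decisions could cause a serious deterioration of health or a surgical intervention, and to Class III if they could cause death or an irreversible deterioration of health. Class I is largely reserved for software that does not inform such decisions. Identifying the correct class at the outset determines the conformity assessment route: self-declaration for Class I, notified body evaluation for Classes IIa, IIb, and III, or the newly proposed “AI-specific” pathway EMA is piloting in 2026.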
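The decision-support branch of MDR Annex VIII Rule 11 can be sketched as a small function, which is handy for documenting the classification rationale. This is a simplified, hypothetical helper: the harm-level labels are assumptions, it ignores Rule 11's separate physiological-monitoring branch, and it is no substitute for a documented classification justification.

```python
from enum import Enum


class DeviceClass(Enum):
    I = "I"
    IIA = "IIa"
    IIB = "IIb"
    III = "III"


# Hypothetical labels for the worst-case impact of a decision the software informs.
HARM_LEVELS = {"death_or_irreversible", "serious_deterioration_or_surgery", "other"}


def classify_rule_11(informs_diagnosis_or_therapy: bool, worst_case_harm: str) -> DeviceClass:
    """Simplified reading of MDR Annex VIII Rule 11 for decision-support software.

    The physiological-monitoring branch of Rule 11 is deliberately omitted.
    """
    if worst_case_harm not in HARM_LEVELS:
        raise ValueError(f"unknown harm level: {worst_case_harm}")
    if not informs_diagnosis_or_therapy:
        return DeviceClass.I  # "all other software"
    if worst_case_harm == "death_or_irreversible":
        return DeviceClass.III
    if worst_case_harm == "serious_deterioration_or_surgery":
        return DeviceClass.IIB
    return DeviceClass.IIA  # default for diagnostic/therapeutic decision support
```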

Key regulatory milestones:

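- Conformity assessment and CE marking (pre-market notification is a US FDA route, and a marketing authorization application (MAA) applies to medicinal products rather than devices)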

- Clinical evidence submission

- Post‑market surveillance plan

- Periodic safety update reports (PSURs) for Class IIa, IIb, and III devices (Class I devices prepare a post-market surveillance report instead)
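PSUR cadence under MDR Article 86 (updated at least annually for Class IIb and III, at least every two years for Class IIa) is easy to lose track of across several app versions. A minimal scheduling sketch, assuming the date of the last PSUR is known. The helper names are hypothetical, and the day of month is clamped to 28 so every result is a valid date, which errs on the early side.

```python
from datetime import date
from typing import Optional

# Maximum PSUR update intervals in months, per MDR Article 86.
# Class I devices prepare a PMS report (Article 85) instead of a PSUR.
PSUR_INTERVAL_MONTHS = {"IIa": 24, "IIb": 12, "III": 12}


def add_months(d: date, months: int) -> date:
    """Shift a date by whole months, clamping the day to 28 (conservative)."""
    total = d.month - 1 + months
    return date(d.year + total // 12, total % 12 + 1, min(d.day, 28))


def next_psur_due(device_class: str, last_psur: date) -> Optional[date]:
    """Latest date the next PSUR update is due, or None if no PSUR applies."""
    months = PSUR_INTERVAL_MONTHS.get(device_class)
    if months is None:
        return None
    return add_months(last_psur, months)
```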

2. Aligning with ISO 13485 and ISO 14971: Risk Management Lifecycle

2.1 Defining the Context and Intended Use

ISO 13485 requires the intended use to be defined as an input to design and development. For AI diagnostics, document the clinical workflow, the target patient population, and the specific decision points the algorithm informs. This context feeds directly into the EMA’s intended use statement in the technical documentation.
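Capturing the context of use as structured data lets the same fields populate both the risk analysis and a draft intended use statement. The class, its field names, and the sentence template below are hypothetical; the three fields mirror the items listed above.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class IntendedUse:
    """Hypothetical record of the context of use for an AI diagnostic app."""
    clinical_workflow: str
    target_population: str
    decision_points: List[str] = field(default_factory=list)

    def missing_fields(self) -> List[str]:
        """Names of required fields that are still empty."""
        missing = []
        if not self.clinical_workflow.strip():
            missing.append("clinical_workflow")
        if not self.target_population.strip():
            missing.append("target_population")
        if not self.decision_points:
            missing.append("decision_points")
        return missing

    def statement(self) -> str:
        """Render a draft intended use sentence for the technical documentation."""
        points = "; ".join(self.decision_points)
        return (f"Intended to support {points} for {self.target_population} "
                f"within {self.clinical_workflow}.")
```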

2.2 Risk Analysis and Classification

Conduct a structured risk analysis that evaluates algorithmic bias, data quality, model drift, and potential misdiagnoses. Score each hazard against the severity and probability scales and the risk acceptability criteria defined in your risk management plan (ISO 14971 requires manufacturers to set these criteria rather than prescribing a single matrix), then assign mitigation measures. For example, a false-negative result in cancer screening is likely to be rated an unacceptable risk before controls are applied, demanding rigorous performance validation and a robust post-market monitoring plan.
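A severity-by-probability matrix is one common way to apply manufacturer-defined acceptability criteria. In this sketch the five-point scales, the score bands, and the example hazards are all hypothetical placeholders for the values in your own risk management plan.

```python
# Hypothetical 5-point scales; ISO 14971 leaves the scales and the
# acceptability criteria to the manufacturer's risk management plan.
SEVERITY = {"negligible": 1, "minor": 2, "serious": 3, "critical": 4, "catastrophic": 5}
PROBABILITY = {"improbable": 1, "remote": 2, "occasional": 3, "probable": 4, "frequent": 5}

# Hypothetical score bands for the acceptability decision.
UNACCEPTABLE_FROM = 12
REDUCE_FROM = 5


def evaluate_risk(severity: str, probability: str) -> str:
    """Return 'unacceptable', 'reduce' (control further and justify), or 'acceptable'."""
    score = SEVERITY[severity] * PROBABILITY[probability]
    if score >= UNACCEPTABLE_FROM:
        return "unacceptable"
    if score >= REDUCE_FROM:
        return "reduce"
    return "acceptable"


# Illustrative hazard register entries: (hazard, severity, probability).
hazards = [
    ("false negative in cancer screening", "catastrophic", "occasional"),
    ("result label truncated on small screens", "minor", "remote"),
]
for name, sev, prob in hazards:
    print(f"{name}: {evaluate_risk(sev, prob)}")
```

Multiplying ordinal ranks is a simplification; some risk management plans use a lookup table instead so that high-severity hazards can never fall into the acceptable band.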

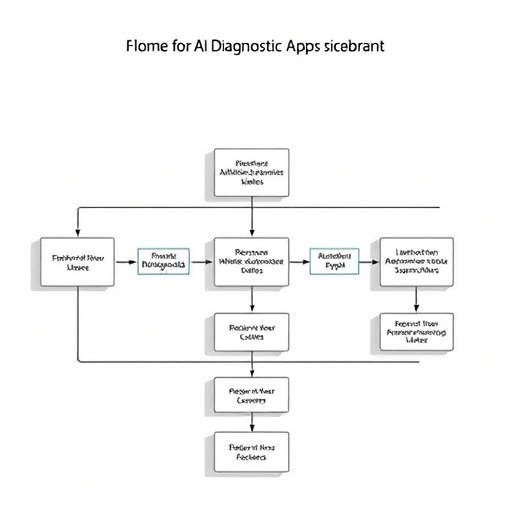

3. The EMA Checklist: Detailed Step‑by‑Step Process

3.1 Pre‑Submission Preparation

Gather all QMS documentation, including your ISO 13485 certificate, and ensure your software development life cycle (SDLC) follows IEC 62304 (medical device software life cycle processes). Prepare a concise clinical evaluation plan that outlines data sources, inclusion/exclusion criteria, and statistical methods.
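One statistical method a clinical evaluation plan might specify is a precision-based sample size for sensitivity. This sketch uses Buderer's normal-approximation formula: the number of disease-positive subjects is z²·Se·(1 − Se)/d², divided by the disease prevalence to get the total cohort. The expected sensitivity, margin, and prevalence are assumptions you would justify in the plan.

```python
import math


def sample_size_for_sensitivity(expected_sens: float, margin: float,
                                prevalence: float, z: float = 1.96) -> int:
    """Total subjects so the sensitivity CI half-width is about `margin`.

    Buderer's formula; z = 1.96 corresponds to a two-sided 95% interval.
    """
    if not (0 < expected_sens < 1 and 0 < margin < 1 and 0 < prevalence <= 1):
        raise ValueError("inputs must be proportions in (0, 1)")
    positives_needed = z ** 2 * expected_sens * (1 - expected_sens) / margin ** 2
    return math.ceil(positives_needed / prevalence)
```

With an expected sensitivity of 0.90, a ±0.05 margin, and 10% prevalence, `sample_size_for_sensitivity(0.90, 0.05, 0.10)` returns 1383.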

3.2 Clinical Evaluation and Evidence

EMA expects a thorough clinical evaluation that demonstrates the algorithm’s safety and performance. This includes:

- External validation studies (retrospective and prospective cohorts)

- Reference standard comparisons

- Human‑machine interaction studies (usability and training)

- Ethics and data governance documentation
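Reference standard comparisons are usually summarized as sensitivity and specificity with confidence intervals. The sketch below uses the Wilson score interval, one common choice for binomial proportions; the confusion-matrix counts in the usage note are made up for illustration.

```python
import math
from typing import Dict, Tuple


def wilson_interval(successes: int, n: int, z: float = 1.96) -> Tuple[float, float]:
    """Wilson score confidence interval for a binomial proportion."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = successes / n
    denom = 1 + z ** 2 / n
    centre = (p + z ** 2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z ** 2 / (4 * n ** 2)) / denom
    return centre - half, centre + half


def diagnostic_performance(tp: int, fn: int, tn: int, fp: int) -> Dict[str, object]:
    """Sensitivity and specificity against the reference standard, with 95% CIs."""
    return {
        "sensitivity": tp / (tp + fn),
        "sensitivity_ci": wilson_interval(tp, tp + fn),
        "specificity": tn / (tn + fp),
        "specificity_ci": wilson_interval(tn, tn + fp),
    }
```

For example, 90 true positives and 10 false negatives give a sensitivity of 0.90 with a 95% interval of roughly 0.826 to 0.945.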

3.3 Technical Documentation (QMS, risk files, AI model documentation)

The technical file must contain:

- Design history file (DHF) that tracks all design decisions and model iterations

- Risk management file prepared according to ISO 14971 within the ISO 13485 QMS, showing mitigations and residual risks

- Algorithm documentation: architecture, training data provenance, performance metrics, explainability methods

- Software validation reports (unit, integration, system, performance)

- Device description and labeling, including risk warnings and instructions for use (IFU)
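The technical-file contents listed above can be tracked with a simple completeness check before submission. This is a hypothetical sketch: the section keys mirror the list, and the `approved` flag stands in for whatever document-control status your QMS uses.

```python
from dataclasses import dataclass
from typing import Dict, List

# Section keys mirror the technical-file list; names are hypothetical.
REQUIRED_SECTIONS = (
    "design_history_file",
    "risk_management_file",
    "algorithm_documentation",
    "software_validation_reports",
    "device_description_and_labeling",
)


@dataclass
class DossierEntry:
    section: str
    version: str
    approved: bool


def dossier_gaps(entries: List[DossierEntry]) -> Dict[str, str]:
    """Map each problem section to a reason: missing, or present but unapproved."""
    latest = {e.section: e for e in entries}  # later entries override earlier ones
    gaps: Dict[str, str] = {}
    for section in REQUIRED_SECTIONS:
        entry = latest.get(section)
        if entry is None:
            gaps[section] = "missing"
        elif not entry.approved:
            gaps[section] = f"version {entry.version} not approved"
    return gaps
```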

3.4 Post‑Market Surveillance (PMS) and Vigilance

EMA requires a PMS plan that monitors device performance in real-world use, collects adverse event data, and feeds findings back into the risk management file. For AI apps, this includes monitoring for model drift, particularly in continuous learning systems (CLS), and predefined re-validation triggers. Update PSURs at least annually for Class IIb/III devices and at least every two years for Class IIa devices.
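One way to detect input drift is the population stability index (PSI), which compares the binned distribution of a model input (or score) in the field against the validation baseline. This is a sketch under stated assumptions: inputs are already binned into proportions, and the 0.25 trigger is a commonly cited rule of thumb, not a regulatory value.

```python
import math
from typing import Sequence


def population_stability_index(expected: Sequence[float], actual: Sequence[float],
                               eps: float = 1e-6) -> float:
    """PSI between two binned distributions given as per-bin proportions."""
    if len(expected) != len(actual):
        raise ValueError("bin counts differ")
    psi = 0.0
    for e_raw, a_raw in zip(expected, actual):
        e, a = max(e_raw, eps), max(a_raw, eps)  # avoid log(0) on empty bins
        psi += (a - e) * math.log(a / e)
    return psi


def needs_revalidation(psi: float, threshold: float = 0.25) -> bool:
    """Hypothetical re-validation trigger taken from the PMS plan."""
    return psi >= threshold
```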

4. Harmonizing Documentation Across ISO 13485 and EMA

4.1 Risk Management File Integration

Embed the ISO 14971 risk management file, maintained under your ISO 13485 QMS, within the EMA technical dossier. Use a unified risk register that links each risk entry to its regulatory justification, mitigation action, and monitoring plan, so reviewers can trace decisions from risk identification to post-market reporting.
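The unified risk register described above can be checked mechanically for broken traceability links. The record layout and field names below are hypothetical; the three link fields correspond to the regulatory justification, mitigation action, and monitoring plan named in the text.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class RiskEntry:
    risk_id: str
    hazard: str
    regulatory_justification: str  # e.g. the MDR Annex I requirement addressed
    mitigation: str
    monitoring_plan: str           # PMS activity that watches this risk


def untraceable(register: List[RiskEntry]) -> List[str]:
    """IDs of entries with an empty link in the identification-to-PMS chain."""
    return [
        r.risk_id
        for r in register
        if not all(link.strip() for link in
                   (r.regulatory_justification, r.mitigation, r.monitoring_plan))
    ]
```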

4.2 Software Development Life Cycle (SDLC) and Quality Management

Map each SDLC phase (requirements, design, implementation, verification, validation, release) to ISO 13485 quality procedures. Demonstrate that software design reviews, code audits, and change control processes comply with ISO 13485 clause 7.3 (design and development) and IEC 62304, and that they support the EMA’s audit trail expectations.
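A release gate can enforce the phase-to-procedure mapping. The mapping below is an illustrative reading of the ISO 13485:2016 design and development subclauses, and the function names and review-record format are hypothetical.

```python
from typing import Dict, List

SDLC_PHASES = ["requirements", "design", "implementation",
               "verification", "validation", "release"]

# Illustrative mapping of SDLC phases to ISO 13485:2016 clause 7.3 subclauses.
PHASE_TO_CLAUSE = {
    "requirements": "7.3.3 design and development inputs",
    "design": "7.3.4 outputs / 7.3.5 review",
    "implementation": "7.3.4 design and development outputs",
    "verification": "7.3.6 design and development verification",
    "validation": "7.3.7 design and development validation",
    "release": "7.3.8 design and development transfer",
}


def release_blockers(approved_reviews: Dict[str, bool]) -> List[str]:
    """Phases before release that lack an approved review record."""
    return [p for p in SDLC_PHASES[:-1] if not approved_reviews.get(p, False)]


def can_release(approved_reviews: Dict[str, bool]) -> bool:
    return not release_blockers(approved_reviews)
```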

5. Common Pitfalls and How to Avoid Them

5.1 Over‑reliance on Proprietary AI Models

Using black‑box models without sufficient explainability can trigger regulatory concerns. Provide transparency through model interpretability tools, explainable AI (XAI) frameworks, and clear documentation of how decisions are derived.
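Permutation importance is one model-agnostic interpretability technique (among many XAI options) that works even when a model's internals are opaque: shuffle one input feature and measure how much accuracy drops. A minimal pure-Python sketch, assuming `predict` maps one feature row to a 0/1 label; the function name is hypothetical, not a specific library's API.

```python
import random
from typing import Callable, List, Sequence


def permutation_importance(predict: Callable[[Sequence[float]], int],
                           X: List[List[float]], y: List[int],
                           n_repeats: int = 10, seed: int = 0) -> List[float]:
    """Mean drop in accuracy when each feature column is shuffled."""
    rng = random.Random(seed)

    def accuracy(rows: List[List[float]]) -> float:
        return sum(predict(r) == t for r, t in zip(rows, y)) / len(y)

    baseline = accuracy(X)
    importances = []
    for j in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)  # break the link between feature j and the label
            shuffled = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
            drops.append(baseline - accuracy(shuffled))
        importances.append(sum(drops) / n_repeats)
    return importances
```

A feature the model ignores scores exactly zero, which makes this a useful sanity check that the model relies on clinically plausible inputs.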

5.2 Inadequate Labeling and Post‑Market Support

Failing to update IFU or to monitor adverse events can lead to recalls. Establish a version control system for labeling, and integrate a robust PMS workflow that triggers alerts when performance metrics fall below predefined thresholds.
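A PMS alert on a predefined performance threshold can be as simple as a rolling window over confirmed outcomes. This sketch assumes confirmed-positive diagnoses are fed back after the fact; the class name, threshold, window, and minimum case count are hypothetical values that would come from the PMS plan.

```python
from collections import deque
from typing import Deque, Optional


class SensitivityMonitor:
    """Rolling sensitivity over the most recent confirmed-positive cases."""

    def __init__(self, threshold: float = 0.85, window: int = 200, min_cases: int = 50):
        self.threshold = threshold
        self.min_cases = min_cases
        self.outcomes: Deque[bool] = deque(maxlen=window)  # oldest cases drop off

    def record(self, predicted_positive: bool) -> None:
        """Log whether the app flagged a case later confirmed positive."""
        self.outcomes.append(predicted_positive)

    def sensitivity(self) -> Optional[float]:
        if len(self.outcomes) < self.min_cases:
            return None  # too few cases for a stable estimate
        return sum(self.outcomes) / len(self.outcomes)

    def alert(self) -> bool:
        s = self.sensitivity()
        return s is not None and s < self.threshold
```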

6. Future Outlook: EMA 2026 Updates and AI Governance

6.1 AI Ethics, Explainability, and Transparency

EMA’s 2026 guidance will emphasize ethical principles such as fairness, accountability, and transparency. Developers should proactively embed fairness audits, bias mitigation, and explainable AI modules into their product lifecycle.
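A basic fairness audit compares sensitivity (true positive rate) across patient subgroups. The largest gap is one simple "equal opportunity" style measure. This is a sketch: the record format `(subgroup, truly_positive, predicted_positive)` and the function names are assumptions, and an acceptable gap would need to be justified clinically.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def subgroup_sensitivity(records: Iterable[Tuple[str, bool, bool]]) -> Dict[str, float]:
    """Sensitivity per subgroup from (subgroup, truly_positive, predicted_positive)."""
    tp: Dict[str, int] = defaultdict(int)
    pos: Dict[str, int] = defaultdict(int)
    for group, truth, pred in records:
        if truth:  # only confirmed positives contribute to sensitivity
            pos[group] += 1
            tp[group] += int(pred)
    return {g: tp[g] / pos[g] for g in pos}


def max_gap(per_group: Dict[str, float]) -> float:
    """Largest sensitivity difference between any two subgroups."""
    return max(per_group.values()) - min(per_group.values())
```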

6.2 EMA’s Proposed AI‑Specific Guidance

EMA is piloting a dedicated AI pathway that will require evidence of continuous learning governance, model versioning, and a formal audit of data sources. Early engagement with EMA’s AI advisory group can help anticipate changes and tailor documentation accordingly.
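Model versioning and a formal audit of data sources can both rest on content hashes, so every released model version is tied to exactly the weights and datasets that produced it. The record layout and function names below are hypothetical; only `hashlib` and `json` from the standard library are used.

```python
import hashlib
import json
from typing import Dict


def sha256_bytes(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def model_version_record(model_version: str, weights: bytes,
                         data_sources: Dict[str, bytes]) -> Dict[str, object]:
    """Hypothetical audit record tying a model version to hashes of its inputs."""
    return {
        "model_version": model_version,
        "weights_sha256": sha256_bytes(weights),
        # Sorted so the record is identical regardless of input order.
        "data_sources": {name: sha256_bytes(blob)
                         for name, blob in sorted(data_sources.items())},
    }


def record_digest(record: Dict[str, object]) -> str:
    """Stable digest of the whole record, e.g. for an append-only change log."""
    return sha256_bytes(json.dumps(record, sort_keys=True).encode("utf-8"))
```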

By integrating ISO 13485 risk management with EMA’s regulatory steps, developers can create a coherent submission that meets both quality and safety expectations. This checklist serves as a living document; update it as you iterate your AI model and as EMA’s guidance evolves.