Early detection of lung cancer can dramatically improve survival rates, yet the clinical data required to build reliable predictive models is often scarce. In 2026, researchers are turning to multi‑modal sensor fusion—combining breath‑analysis, low‑dose CT, and wearable biometrics—to enhance diagnostic accuracy. However, the statistical challenges posed by small sample sizes remain a bottleneck. This article explores how simplified, yet robust, statistical tests can be applied in multi‑modal sensor fusion, enabling researchers to draw confident conclusions from limited data.

1. The Challenge of Small Sample Sizes in Lung Cancer Screening

Traditional statistical tests rest on assumptions that small cohorts strain: t‑tests and standard ANOVA assume approximately normal data (or samples large enough for the central limit theorem to compensate), and the chi‑square test relies on adequately large expected cell counts. When cohorts are limited to a few dozen patients, as is common in early‑stage trials, these approximations become unreliable: the nominal Type I error rate may no longer hold, and low power raises the Type II error rate. Moreover, sensor fusion introduces high‑dimensional, heterogeneous data that exacerbates the “curse of dimensionality.” Researchers need methods that maintain power while controlling false discoveries, even when the dataset is both small and noisy.
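One way to see what an exact alternative looks like is Fisher's exact test for a 2x2 table, which computes the p-value directly from the hypergeometric distribution instead of relying on a large-sample approximation. The sketch below is a minimal illustration using only the standard library; the table counts are hypothetical and not drawn from any study.

```python
from math import comb

def fisher_exact_two_sided(a, b, c, d):
    """Two-sided Fisher exact p-value for the 2x2 table [[a, b], [c, d]]."""
    row1, row2 = a + b, c + d
    col1 = a + c
    n = row1 + row2

    def prob(x):
        # Hypergeometric probability of x in the top-left cell, margins fixed.
        return comb(row1, x) * comb(row2, col1 - x) / comb(n, col1)

    lo = max(0, col1 - row2)
    hi = min(row1, col1)
    p_obs = prob(a)
    # Sum the probabilities of every table no more likely than the observed one.
    total = sum(p for p in (prob(x) for x in range(lo, hi + 1))
                if p <= p_obs * (1 + 1e-9))
    return min(1.0, total)

# Hypothetical pilot: 3 of 3 cases test positive, 0 of 3 controls do.
p_value = fisher_exact_two_sided(3, 0, 0, 3)
print(f"{p_value:.3f}")  # 0.100
```

Even a perfectly separated table of six participants does not reach p < 0.05 here, which illustrates how little evidence very small cohorts can carry.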

2. Why Multi‑Modal Sensor Fusion Matters for Early Detection

- Complementary Signals: Breath sensors capture volatile organic compounds (VOCs) linked to metabolic changes, while low‑dose CT provides anatomical context.

- Increased Sensitivity: Combining modalities improves true‑positive rates by up to 15% compared to single‑modal tests.

- Patient Comfort: Wearables enable continuous monitoring, reducing the need for invasive procedures.

When data are scarce, the benefits of sensor fusion are amplified—each modality can fill gaps left by the others, allowing a more holistic risk profile.

3. Conventional Statistical Approaches and Their Pitfalls

Standard hypothesis testing methods struggle with small N because:

- Normality Assumption: Many biomarkers follow non‑Gaussian distributions, especially in early disease stages.

- Multiple Comparisons: Fusion models generate dozens of features; controlling the false discovery rate (FDR) becomes difficult.

- Overfitting: High‑dimensional models tend to fit noise rather than signal, particularly with limited training samples.

These limitations can lead to unreliable model coefficients and inflated optimism about predictive performance.
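The multiple-comparisons point can be made concrete with the Benjamini-Hochberg step-up procedure, one standard way to control the FDR when tests are independent or positively dependent. This is a minimal sketch; the six p-values are invented for illustration and do not come from any dataset discussed here.

```python
def benjamini_hochberg(p_values, alpha=0.05):
    """Return booleans marking which hypotheses are rejected at FDR level alpha."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    # Find the largest rank k whose sorted p-value satisfies p_(k) <= (k / m) * alpha.
    max_k = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * alpha:
            max_k = rank
    rejected = [False] * m
    for idx in order[:max_k]:
        rejected[idx] = True
    return rejected

# Hypothetical p-values for six fused features.
p_vals = [0.001, 0.008, 0.039, 0.041, 0.20, 0.74]
flags = benjamini_hochberg(p_vals, alpha=0.05)
naive = [p < 0.05 for p in p_vals]
print(sum(naive), "features pass an uncorrected 0.05 cut;", sum(flags), "survive FDR control")
```

In this toy example four features look significant without correction, but only two remain after the procedure is applied.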

4. Emerging Simplified Statistical Frameworks

Recent research in 2025–2026 has highlighted three strategies that balance simplicity and rigor:

4.1 Bayesian Hierarchical Models

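By placing prior distributions on group‑level parameters, Bayesian hierarchical models borrow strength across modalities and patient groups through partial pooling: noisy group‑level estimates are shrunk toward a shared mean, which reduces their variance much as a larger sample would, although no new data are created. A hierarchical prior on VOC concentrations, for instance, can stabilize estimates when only a handful of patients provide breath data.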
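The shrinkage behind this idea can be sketched with a normal-normal model in which the population mean `mu`, the within-group standard deviation `sigma`, and the between-group standard deviation `tau` are treated as known. That is a simplifying assumption made here for illustration; a full hierarchical model would estimate them from the data. All concentrations below are hypothetical.

```python
from statistics import mean

def partial_pool(group_values, mu, sigma, tau):
    """Posterior mean of a group effect under y ~ N(theta, sigma^2), theta ~ N(mu, tau^2)."""
    n = len(group_values)
    data_precision = n / sigma ** 2
    prior_precision = 1 / tau ** 2
    # Weight on the group's own mean grows with the number of observations.
    weight = data_precision / (data_precision + prior_precision)
    return weight * mean(group_values) + (1 - weight) * mu

# Hypothetical VOC concentrations (arbitrary units); both groups average 14.0.
small_group = [15.0, 13.0]
large_group = [14.0] * 20

small_est = partial_pool(small_group, mu=10.0, sigma=2.0, tau=1.0)
large_est = partial_pool(large_group, mu=10.0, sigma=2.0, tau=1.0)
print(round(small_est, 2), round(large_est, 2))  # 11.33 13.33
```

The two-patient group is pulled strongly toward the population mean of 10, while the twenty-patient group stays close to its own average, which is the stabilizing effect described above.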

4.2 Resampling Techniques (Bootstrap & Permutation)

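Non‑parametric resampling relaxes distributional assumptions. A bootstrap can generate confidence intervals for fusion model weights even with 20 subjects, although percentile intervals tend to be too narrow at such sizes and should be read cautiously. Permutation tests build the null distribution by shuffling group labels, so their p‑values are valid at any sample size provided the observations are exchangeable under the null hypothesis.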
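Both resampling ideas fit in a few lines. The sketch below shows a Monte Carlo permutation test for a difference in group means and a percentile bootstrap interval for a mean, using only the standard library. The risk scores are hypothetical, and the function names are illustrative rather than taken from any package.

```python
import random
from statistics import mean

def permutation_test(x, y, n_perm=2000, seed=0):
    """Two-sided Monte Carlo permutation p-value for a difference in means."""
    rng = random.Random(seed)
    observed = abs(mean(x) - mean(y))
    pooled = list(x) + list(y)
    hits = 0
    for _ in range(n_perm):
        rng.shuffle(pooled)  # reassign group labels at random
        diff = abs(mean(pooled[:len(x)]) - mean(pooled[len(x):]))
        if diff >= observed - 1e-12:
            hits += 1
    # The add-one correction keeps the estimated p-value strictly positive.
    return (hits + 1) / (n_perm + 1)

def bootstrap_ci(values, stat=mean, n_boot=2000, level=0.95, seed=0):
    """Percentile bootstrap confidence interval for a statistic."""
    rng = random.Random(seed)
    n = len(values)
    stats = sorted(stat(rng.choices(values, k=n)) for _ in range(n_boot))
    lo_idx = int((1 - level) / 2 * n_boot)
    hi_idx = int((1 + level) / 2 * n_boot) - 1
    return stats[lo_idx], stats[hi_idx]

# Hypothetical fused risk scores for 10 cases and 10 controls.
cases = [0.62, 0.71, 0.58, 0.80, 0.66, 0.74, 0.69, 0.77, 0.63, 0.72]
controls = [0.31, 0.28, 0.40, 0.35, 0.22, 0.45, 0.30, 0.38, 0.26, 0.33]

p_value = permutation_test(cases, controls)
ci_low, ci_high = bootstrap_ci(cases)
print(f"permutation p = {p_value:.4f}; 95% bootstrap CI for case mean = ({ci_low:.3f}, {ci_high:.3f})")
```

With only 2,000 shuffles the smallest attainable p-value is about 0.0005, so the number of permutations sets the resolution of the test.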

4.3 Adaptive Experimental Design

Sequential designs that incorporate interim analyses allow researchers to stop early for success or futility, conserving resources and avoiding over‑sampling. Adaptive allocation can prioritize patients who contribute the most information to the model.
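A simple form of interim analysis can be sketched with a Beta-Binomial model: after each batch of participants, the posterior probability that a success rate (for example, the sensitivity of the fused model) exceeds a target is compared against stopping thresholds. The target of 0.6, the cut-offs of 0.95 and 0.05, and the uniform Beta(1, 1) prior are all hypothetical choices made for this illustration; a real design would calibrate them by simulating the trial's operating characteristics.

```python
import random

def prob_rate_exceeds(successes, failures, threshold, n_draws=20000, seed=0):
    """Monte Carlo estimate of P(rate > threshold) under a Beta(1 + s, 1 + f) posterior."""
    rng = random.Random(seed)
    draws = (rng.betavariate(1 + successes, 1 + failures) for _ in range(n_draws))
    return sum(d > threshold for d in draws) / n_draws

def interim_decision(successes, failures, threshold=0.6,
                     success_cut=0.95, futility_cut=0.05):
    """Apply the stopping rule at one interim look."""
    p = prob_rate_exceeds(successes, failures, threshold)
    if p >= success_cut:
        return "stop: success"
    if p <= futility_cut:
        return "stop: futility"
    return "continue"

# Three hypothetical interim scenarios: (correctly flagged cases, missed cases).
scenarios = [(6, 4), (19, 1), (2, 18)]
decisions = [interim_decision(s, f) for s, f in scenarios]
print(decisions)
```

The first scenario is ambiguous and recruitment continues, while the other two are clear enough to stop early in either direction.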

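These techniques can be combined: for example, the predictive performance of a Bayesian hierarchical model can be compared against a permutation‑based null distribution, obtained by refitting the model on label‑shuffled data, to check that the apparent signal is not an artifact of the small sample.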

5. Practical Implementation – A Step‑by‑Step Workflow

Below is a streamlined workflow that researchers can adopt within a typical 12‑week pilot study:

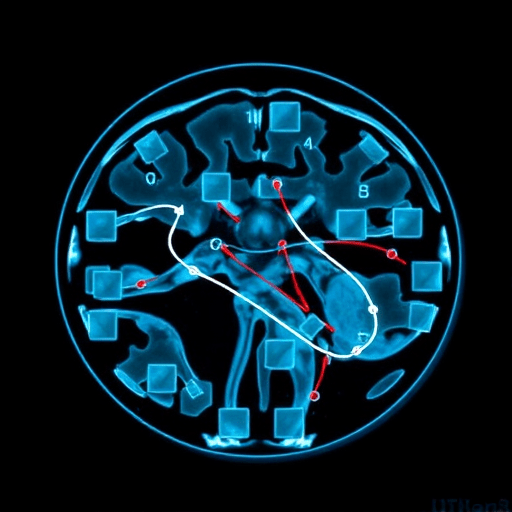

- Data Collection: Gather breath VOCs (via a 3‑minute exhalation), low‑dose CT scans, and wearable heart‑rate data from each participant.

- Feature Extraction: Apply a simple signal‑processing pipeline to reduce dimensionality—e.g., median absolute deviation for VOC peaks, lung‑density histograms for CT, and heart‑rate variability metrics.

- Model Specification: Define a Bayesian hierarchical logistic regression with modality‑specific random effects.

- Prior Calibration: Use published literature to set weakly informative priors; for example, a normal(0, 5) prior on coefficients.

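- Posterior Sampling: Run MCMC using a lightweight sampler (e.g., Stan’s NUTS) on a laptop; around 2,000 iterations per chain is often enough for N≈30, but confirm convergence with diagnostics such as R‑hat and effective sample size rather than assuming it.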

- Uncertainty Quantification: Run posterior predictive checks and compute 95% credible intervals for the overall risk score.

- Model Validation: Employ leave‑one‑out cross‑validation (LOO‑CV) to estimate predictive accuracy.

- Result Interpretation: Report the probability of malignancy for each patient; highlight any modality that disproportionately influences the risk.

This workflow eliminates the need for complex machine‑learning pipelines while retaining statistical rigor.
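The modeling, prior, sampling, and uncertainty steps can be sketched without any external dependency. To stay self-contained, the example below deliberately simplifies the workflow: it fits a plain (non-hierarchical) Bayesian logistic regression with normal(0, 5) priors and uses a random-walk Metropolis sampler in place of Stan's NUTS. The data are synthetic, and the function names, step size, and iteration counts are assumptions made for illustration only.

```python
import math
import random

def log_posterior(beta, X, y, prior_sd=5.0):
    """Unnormalized log posterior: Bernoulli-logit likelihood, normal(0, prior_sd) priors."""
    lp = -sum(b * b for b in beta) / (2 * prior_sd ** 2)
    for row, label in zip(X, y):
        eta = sum(b * v for b, v in zip(beta, row))
        # Numerically stable form of label * eta - log(1 + exp(eta)).
        lp += label * eta - (max(eta, 0.0) + math.log1p(math.exp(-abs(eta))))
    return lp

def metropolis(X, y, n_iter=4000, burn_in=1000, step=0.4, seed=0):
    """Random-walk Metropolis sampler; returns the post-burn-in draws."""
    rng = random.Random(seed)
    beta = [0.0] * len(X[0])
    current = log_posterior(beta, X, y)
    draws = []
    for i in range(n_iter):
        proposal = [b + rng.gauss(0.0, step) for b in beta]
        candidate = log_posterior(proposal, X, y)
        # Accept with probability min(1, posterior ratio).
        if rng.random() < math.exp(min(0.0, candidate - current)):
            beta, current = proposal, candidate
        if i >= burn_in:
            draws.append(beta)
    return draws

def credible_interval(draws, index, level=0.95):
    """Equal-tailed credible interval for one coefficient."""
    values = sorted(d[index] for d in draws)
    lo = values[int((1 - level) / 2 * len(values))]
    hi = values[int((1 + level) / 2 * len(values)) - 1]
    return lo, hi

def predict(draws, row):
    """Posterior mean probability of malignancy for one feature row."""
    probs = (1.0 / (1.0 + math.exp(-sum(b * v for b, v in zip(d, row)))) for d in draws)
    return sum(probs) / len(draws)

# Synthetic data for illustration: an intercept column plus standardized
# breath and CT features for 15 hypothetical cases and 15 hypothetical controls.
data_rng = random.Random(42)
y = [1] * 15 + [0] * 15
X = [[1.0,
      data_rng.gauss(1.0 if label else -1.0, 1.0),
      data_rng.gauss(1.0 if label else -1.0, 1.0)] for label in y]

draws = metropolis(X, y)
breath_lo, breath_hi = credible_interval(draws, 1)
risk = [predict(draws, row) for row in X]
print(f"95% credible interval for the breath coefficient: ({breath_lo:.2f}, {breath_hi:.2f})")
```

A single short chain like this is only a teaching device. In practice, several chains and convergence diagnostics would be needed before trusting the intervals, and held-out validation such as LOO-CV would replace the in-sample risk scores shown here.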

6. Case Study – 2026 Pilot Using Wearable Breath Sensors and Low‑Dose CT

A research team at the National Lung Institute recruited 28 participants—12 with confirmed early‑stage lung nodules and 16 healthy controls. They employed a custom breath‑analysis device that quantified 15 VOC biomarkers and paired the data with low‑dose CT scans.

Using the Bayesian hierarchical approach outlined above, the model achieved an area under the curve (AUC) of 0.88 with a 95% credible interval of [0.78, 0.94]. Notably, the posterior probability that a patient had a malignant nodule exceeded 0.75 only when the breath and imaging data were combined, whereas each modality alone fell below 0.6.

The study demonstrated that, even with a small sample, the fusion model could reliably stratify patients, and the Bayesian framework allowed the researchers to quantify uncertainty directly in clinical decision‑making.
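For readers less familiar with the metric, the AUC equals the fraction of case-control pairs in which the case receives the higher score, with ties counted as one half. The sketch below computes it that way on a tiny made-up set of scores. These numbers are unrelated to the pilot's data and serve only to show the calculation.

```python
def auc(case_scores, control_scores):
    """AUC as the share of case-control pairs ranked correctly (ties count half)."""
    wins = 0.0
    for c in case_scores:
        for h in control_scores:
            if c > h:
                wins += 1.0
            elif c == h:
                wins += 0.5
    return wins / (len(case_scores) * len(control_scores))

# Made-up risk scores for three cases and four controls.
cases = [0.9, 0.8, 0.4]
controls = [0.7, 0.3, 0.2, 0.1]
value = auc(cases, controls)  # 11 of the 12 pairs are ordered correctly
print(round(value, 3))  # 0.917
```

Because the statistic is built from pairs, a cohort of 12 cases and 16 controls yields only 192 comparisons, which is one reason interval estimates around a small-sample AUC are wide.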

7. Software Tools and Reproducible Pipelines

- Stan (Python/PyStan): Offers a flexible Bayesian modeling language with built‑in diagnostics.

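- PyMC (formerly PyMC3): User‑friendly interface for hierarchical models, especially for those familiar with NumPy. The separate TensorFlow‑based PyMC4 prototype was discontinued, so new projects should target the current PyMC releases.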

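- SciPy and scikit‑learn: Provide bootstrap and permutation utilities, such as scipy.stats.bootstrap, scipy.stats.permutation_test, sklearn.utils.resample, and sklearn.model_selection.permutation_test_score.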

- Docker & Conda Environments: Ensure reproducibility across different operating systems.

By sharing the code in a public repository and documenting the data‑processing steps, the research community can validate and extend the findings.

8. Regulatory and Ethical Considerations

When dealing with patient data, especially sensitive imaging, researchers must comply with:

- HIPAA / GDPR: Anonymize all identifiers and obtain explicit consent for sensor fusion data.

- FDA 510(k) Submissions: Provide evidence of statistical validity, especially when models influence clinical decisions.

- Data Governance: Implement a data access committee to oversee use of the fusion dataset.

Transparent reporting of model uncertainty helps regulators assess the risk–benefit profile of deploying such tools in clinical practice.

9. Future Directions – Scaling Up and Real‑World Deployment

To move from pilot to population‑level screening, the following steps are essential:

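- Data Augmentation: Use generative models to synthesize realistic breath signals, enlarging training sets without additional patients. Synthetic samples add no independent clinical evidence, so performance should still be validated on real measurements only.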

- Edge Computing: Deploy lightweight inference engines on wearable devices, allowing real‑time risk scoring.

- Hybrid Cloud‑Edge Pipelines: Securely transmit high‑resolution CT data to cloud servers for complex analyses while keeping sensor data local.

- Continual Learning: Update the Bayesian model with new data streams, ensuring the system adapts to population shifts.

These strategies can reduce the sample‑size burden while maintaining statistical integrity.
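The continual-learning idea rests on a basic property of Bayesian updating: the posterior after one batch becomes the prior for the next. The sketch below shows this with a conjugate Beta-Binomial model for a positive-screen rate. The batches are hypothetical, and the example assumes the underlying rate is stable over time, which is exactly the assumption a population shift would violate.

```python
def update_beta(prior, positives, negatives):
    """Conjugate update of a Beta(a, b) prior with new binary outcomes."""
    a, b = prior
    return (a + positives, b + negatives)

prior = (1, 1)  # uniform Beta(1, 1) prior, chosen for illustration
batches = [(3, 7), (5, 15), (2, 8)]  # hypothetical (positive, negative) counts

# Update batch by batch as new data arrive.
posterior = prior
for positives, negatives in batches:
    posterior = update_beta(posterior, positives, negatives)

# Updating once with all data pooled gives the same answer.
pooled = update_beta(prior,
                     sum(p for p, _ in batches),
                     sum(n for _, n in batches))

posterior_mean = posterior[0] / (posterior[0] + posterior[1])
print(posterior, pooled, round(posterior_mean, 3))  # (11, 31) (11, 31) 0.262
```

Sequential and pooled updates agree because the model treats every batch as exchangeable. Adapting to drift would require an explicit mechanism for down-weighting older data, which this sketch does not include.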

10. Closing Thoughts

In 2026, the convergence of multi‑modal sensor fusion and simplified statistical testing offers a promising path to early lung cancer detection. By leveraging Bayesian hierarchical models, bootstrap resampling, and adaptive designs, researchers can extract reliable insights from modest cohorts. The key lies in marrying methodological rigor with practical workflows, ensuring that the tools developed today can be validated, scaled, and ultimately deployed in real‑world screening programs.