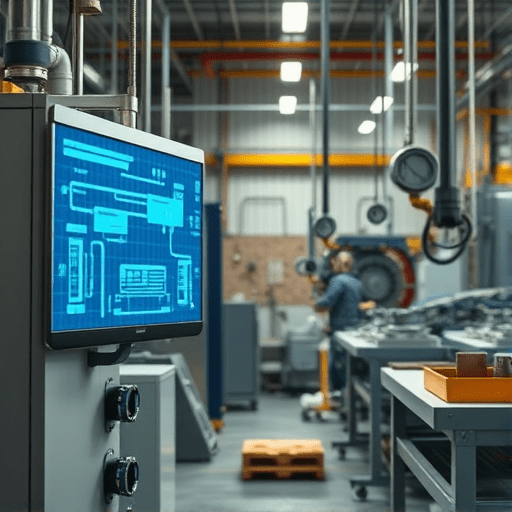

In 2026, small factories are turning to digital twins for predictive maintenance to stay competitive. By creating real-time virtual replicas of machines, plant managers can detect anomalies before they turn into costly failures, schedule maintenance proactively, and maximize equipment uptime. This guide walks you through the essential stages, from sensor selection to cloud modeling, so you can launch a low‑cost, high‑impact digital twin program that fits your production floor.

1. Assess Your Current Equipment and Define Goals

Identify Critical Assets

Start by cataloguing all machinery. Highlight the assets that most frequently cause bottlenecks, those with high replacement costs, or those that have safety implications. For each, record operating hours, typical fault history, and the cost of unscheduled downtime.

Map Out Current Maintenance Practices

Document the existing maintenance schedule: time‑based intervals, manual checklists, and any existing sensor data. Identify gaps where predictive insight would be most valuable. Set clear, measurable objectives—e.g., reduce mean time to repair (MTTR) by 30 % or increase mean time between failures (MTBF) by 20 % over the next year.
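
To make the objectives concrete, it helps to compute a baseline first. The sketch below assumes a hypothetical log of repair durations for one machine over a 720-hour month; the function names and the sample figures are illustrative, not taken from any real plant.

```python
def mttr(repair_hours: list[float]) -> float:
    """Mean time to repair: total repair time divided by number of failures."""
    return sum(repair_hours) / len(repair_hours)


def mtbf(period_hours: float, repair_hours: list[float]) -> float:
    """Mean time between failures: uptime divided by number of failures."""
    uptime = period_hours - sum(repair_hours)
    return uptime / len(repair_hours)


# Hypothetical log: three unscheduled stoppages in a 720-hour month.
repairs = [4.0, 6.0, 2.0]
baseline_mttr = mttr(repairs)
baseline_mtbf = mtbf(720.0, repairs)

# Targets matching the example objectives: MTTR down 30 %, MTBF up 20 %.
target_mttr = baseline_mttr * 0.70
target_mtbf = baseline_mtbf * 1.20

print(f"MTTR {baseline_mttr:.1f} h -> target {target_mttr:.1f} h")
print(f"MTBF {baseline_mtbf:.1f} h -> target {target_mtbf:.1f} h")
```

Recording the baseline per asset before any sensors are installed gives the pilot something to be measured against later.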

2. Select the Right Sensor Suite: Low‑Cost, High‑Value Data

Types of Sensors: Vibration, Temperature, Acoustic, Power

Vibration sensors reveal bearing wear; temperature sensors flag overheating; acoustic sensors detect fluid leaks; power meters expose abnormal energy draw. Choose sensors that align with your failure modes. In 2026, many vendors offer wireless, low‑energy modules that can be retrofitted to legacy equipment.

Placement and Data Quality Considerations

Mount sensors where they capture the most representative data: accelerometers on the bearing housings of rotating shafts, and thermocouples near heat sources. Validate data by cross‑checking sensor readings against manual inspections for at least one production cycle. Noisy data is harder and more expensive to work with than sparse data, so invest in proper shielding and grounding.

3. Build a Scalable Edge Computing Layer

Why Edge Matters for Digital Twins

Edge devices process data locally, reducing latency and bandwidth costs. In a small factory, this means real‑time anomaly detection even when connectivity is intermittent. Edge computing also takes load off the cloud, keeping the system responsive during heavy analytics workloads.

Edge Hardware Options

- Raspberry Pi 4 + Industrial HAT: inexpensive, GPIO‑ready for sensor input.

- NVIDIA Jetson Nano: GPU acceleration for complex AI inference.

- Edge Gateways (e.g., Advantech IoT Gateway): industrial‑grade chassis with multiple serial ports.

Data Preprocessing and Compression

On the edge, apply basic filtering, down‑sampling, and feature extraction (e.g., RMS vibration, temperature variance). Compress data streams using LZ4 or zstd before sending to the cloud. Compression saves bandwidth and storage, while filtering and feature extraction give the models cleaner inputs.
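
A minimal sketch of this edge pipeline, using only the Python standard library. The function names and payload keys are assumptions for illustration. LZ4 and zstd bindings are third-party packages, so `zlib` stands in here as the compressor; swapping in another codec would only change the last line of `build_payload`.

```python
import json
import math
import statistics
import zlib


def rms(samples: list[float]) -> float:
    """Root mean square of a vibration window."""
    return math.sqrt(sum(x * x for x in samples) / len(samples))


def downsample(samples: list[float], factor: int) -> list[float]:
    """Average consecutive blocks of `factor` samples (crude low-pass and decimate)."""
    usable = len(samples) - len(samples) % factor
    return [statistics.fmean(samples[i:i + factor]) for i in range(0, usable, factor)]


def build_payload(vibration: list[float], temperature: list[float], factor: int = 4) -> bytes:
    """Extract features, serialize them as JSON, and compress the result."""
    features = {
        "vibration_rms": rms(vibration),
        "temperature_variance": statistics.pvariance(temperature),
        "vibration_downsampled": downsample(vibration, factor),
    }
    # zlib is a stand-in for LZ4 or zstd, which need third-party packages.
    return zlib.compress(json.dumps(features).encode("utf-8"))


payload = build_payload([0.1, -0.2, 0.15, -0.1] * 64, [61.0, 61.5, 62.0, 61.2])
print(len(payload), "bytes to upload")
```

Sending features rather than raw waveforms is usually the larger saving; compression then trims what remains.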

4. Create Your Digital Twin Model in the Cloud

Choosing a Cloud Platform

Three leading options are:

- AWS IoT TwinMaker – tight integration with AWS IoT Core, SageMaker, and QuickSight.

- Azure Digital Twins – native support for spatial modeling and Azure Monitor.

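- Google Cloud – strong AI services via Vertex AI. Note that Cloud IoT Core was retired in August 2023, so device connectivity now typically goes through a partner IoT platform or a self‑managed MQTT broker feeding Pub/Sub.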

Select based on your existing cloud stack and licensing constraints.

Model Architecture: Asset, Process, and Lifecycle Components

Structure the twin into three layers:

- Asset Layer – physical components, specifications, and operational limits.

- Process Layer – workflow steps, inter‑asset dependencies, and throughput targets.

- Lifecycle Layer – maintenance history, wear models, and projected remaining useful life.
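
One way to picture the three layers is as plain data classes. This is a platform-neutral sketch: the class and field names are hypothetical and do not correspond to the schema of any of the cloud services listed earlier.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class AssetLayer:
    """Physical component, specifications, and operational limits."""
    asset_id: str
    model: str
    max_temperature_c: float
    max_vibration_rms: float


@dataclass
class ProcessLayer:
    """Workflow step, inter-asset dependencies, and throughput target."""
    step: str
    upstream_assets: list = field(default_factory=list)
    throughput_target_per_hour: float = 0.0


@dataclass
class LifecycleLayer:
    """Maintenance history and projected remaining useful life."""
    maintenance_history: list = field(default_factory=list)
    remaining_useful_life_hours: Optional[float] = None


@dataclass
class DigitalTwin:
    asset: AssetLayer
    process: ProcessLayer
    lifecycle: LifecycleLayer

    def within_limits(self, temperature_c: float, vibration_rms: float) -> bool:
        """Check a live reading against the asset's operational limits."""
        return (temperature_c <= self.asset.max_temperature_c
                and vibration_rms <= self.asset.max_vibration_rms)


twin = DigitalTwin(
    asset=AssetLayer("press-01", "hypothetical-press", 85.0, 1.2),
    process=ProcessLayer("stamping", upstream_assets=["feeder-01"], throughput_target_per_hour=400),
    lifecycle=LifecycleLayer(maintenance_history=["2025-11-03 bearing replaced"]),
)
print(twin.within_limits(temperature_c=78.0, vibration_rms=0.9))
```

Keeping the layers separate lets the lifecycle data change after every maintenance event without touching the asset specification.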

Integration with Existing MES / ERP

Expose twin data via REST APIs or message brokers so that your MES can trigger work orders, and ERP can update asset records automatically. This tight integration keeps maintenance records up to date without manual entry.

5. Implement AI‑Powered Predictive Analytics

Anomaly Detection Algorithms

Deploy lightweight models on the edge for immediate alerts:

- Isolation Forest for unsupervised outlier detection.

- One‑class SVM for specific vibration signatures.

- Kalman filters for trend prediction in temperature data.
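
As an illustration of the last item, here is a minimal scalar Kalman filter that tracks a slowly varying temperature and flags readings whose residual is unexpectedly large. It assumes a simple random-walk model; the noise variances and the 3-sigma rule are placeholder choices that would need tuning on real data.

```python
import math


class ScalarKalman:
    """One-dimensional Kalman filter with a random-walk state model."""

    def __init__(self, initial: float, process_var: float = 1e-3, measurement_var: float = 0.5):
        self.x = initial          # current temperature estimate
        self.p = 1.0              # variance of that estimate
        self.q = process_var      # how fast the true value is allowed to wander
        self.r = measurement_var  # sensor noise variance

    def update(self, z: float) -> tuple:
        """Fold in one reading; return (innovation, innovation variance)."""
        self.p += self.q                  # predict: uncertainty grows
        innovation = z - self.x           # residual against the prediction
        s = self.p + self.r               # expected variance of the residual
        gain = self.p / s
        self.x += gain * innovation       # correct the estimate
        self.p *= (1.0 - gain)
        return innovation, s


def is_anomalous(innovation: float, s: float, n_sigma: float = 3.0) -> bool:
    """Flag a reading whose residual exceeds n_sigma standard deviations."""
    return abs(innovation) > n_sigma * math.sqrt(s)


kf = ScalarKalman(initial=60.0)
for reading in [60.1, 59.9, 60.2, 60.0, 75.0]:
    innovation, s = kf.update(reading)
    if is_anomalous(innovation, s):
        print(f"anomaly: {reading} C (residual {innovation:+.1f})")
```

The residual is checked against the filter's own uncertainty, so the threshold tightens as the estimate settles.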

Predictive Failure Models

In the cloud, train supervised models using historical data:

- Gradient Boosting (e.g., XGBoost) to predict time-to-failure.

- Recurrent Neural Networks (RNNs) for sequence‑based degradation patterns.

- Physics‑inspired Bayesian models that incorporate wear equations.
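
Before investing in any of these models, a much simpler baseline is worth having for comparison. The sketch below is not one of the methods listed above: it fits a straight line to a degradation indicator (for example vibration RMS over operating hours) and extrapolates to a failure threshold. The threshold and the sample data are hypothetical.

```python
from statistics import fmean
from typing import Optional


def fit_line(hours: list, values: list) -> tuple:
    """Ordinary least-squares fit; returns (slope, intercept)."""
    mean_h, mean_v = fmean(hours), fmean(values)
    sxx = sum((h - mean_h) ** 2 for h in hours)
    sxy = sum((h - mean_h) * (v - mean_v) for h, v in zip(hours, values))
    slope = sxy / sxx
    return slope, mean_v - slope * mean_h


def remaining_useful_life(hours: list, values: list, failure_threshold: float) -> Optional[float]:
    """Hours until the fitted trend crosses the threshold, or None if no upward trend."""
    slope, intercept = fit_line(hours, values)
    if slope <= 0:
        return None
    crossing_hour = (failure_threshold - intercept) / slope
    return max(0.0, crossing_hour - hours[-1])


# Hypothetical vibration RMS readings taken every 100 operating hours.
rul = remaining_useful_life([0, 100, 200, 300], [1.0, 1.5, 2.0, 2.5], failure_threshold=4.0)
print(f"estimated remaining useful life: {rul:.0f} h")
```

If a gradient-boosted or recurrent model cannot beat this baseline on held-out failures, the extra complexity is probably not yet justified.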

Continuous Learning and Model Drift Mitigation

Set up a CI/CD pipeline that retrains models monthly or after each major maintenance event. Monitor performance metrics such as precision, recall, and root mean squared error (RMSE). If drift is detected, trigger an automatic retrain or human review.
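
A drift check can be as simple as comparing the error on recent data against the error recorded at training time. The sketch below assumes a regression model scored by RMSE; the 25 % tolerance is an arbitrary placeholder.

```python
import math


def rmse(predicted: list, actual: list) -> float:
    """Root mean squared error between predictions and observed values."""
    return math.sqrt(sum((p - a) ** 2 for p, a in zip(predicted, actual)) / len(actual))


def drift_detected(baseline_rmse: float, predicted: list, actual: list,
                   tolerance: float = 0.25) -> bool:
    """True when recent RMSE exceeds the training-time RMSE by more than `tolerance`."""
    return rmse(predicted, actual) > baseline_rmse * (1.0 + tolerance)


# Hypothetical time-to-failure predictions (hours) versus what was observed.
recent_predicted = [120.0, 95.0, 210.0]
recent_actual = [100.0, 80.0, 150.0]
if drift_detected(baseline_rmse=20.0, predicted=recent_predicted, actual=recent_actual):
    print("drift detected: schedule retraining or human review")
```

The same pattern works for precision and recall on classification models, with the comparison reversed since lower values are worse.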

6. Design an Automation Workflow for Maintenance

Condition‑Based Alerts

When a sensor reading or anomaly score exceeds its threshold, the edge device sends a message to the cloud. The twin then shows the anomaly on the dashboard and highlights the affected asset.
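
The message itself can be a small JSON document. The sketch below shows one possible shape; the field names are assumptions, and publishing the message (over MQTT, HTTPS, or whatever the chosen platform expects) is left out.

```python
import json
from datetime import datetime, timezone
from typing import Optional


def build_alert(asset_id: str, metric: str, value: float, threshold: float,
                now: Optional[datetime] = None) -> Optional[str]:
    """Return a JSON alert if `value` exceeds `threshold`, otherwise None."""
    if value <= threshold:
        return None
    timestamp = now or datetime.now(timezone.utc)
    return json.dumps({
        "asset_id": asset_id,
        "metric": metric,
        "value": value,
        "threshold": threshold,
        "timestamp": timestamp.isoformat(),
    })


alert = build_alert("press-01", "vibration_rms", value=1.5, threshold=1.2)
if alert is not None:
    print(alert)  # hand this string to the publishing client
```

Including the threshold in the message lets the dashboard show how far the reading overshot without looking up the asset configuration.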

Work Order Generation

Based on predictive model output, the system auto‑creates a work order in the MES, assigns a technician, and estimates repair time. Include spare part lists and safety checks in the automated ticket.
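
As a rough sketch of what such an automated ticket might contain, the code below maps a predicted remaining useful life to a priority and assembles a work order record. The field names and the 24-hour and 168-hour cut-offs are hypothetical; a real MES will define its own schema and API.

```python
from dataclasses import asdict, dataclass, field


@dataclass
class WorkOrder:
    asset_id: str
    issue: str
    priority: str
    technician: str
    estimated_repair_hours: float
    spare_parts: list = field(default_factory=list)
    safety_checks: list = field(default_factory=list)


def priority_from_rul(rul_hours: float) -> str:
    """Map predicted remaining useful life to a priority (placeholder cut-offs)."""
    if rul_hours < 24:
        return "urgent"
    if rul_hours < 168:
        return "high"
    return "routine"


def create_work_order(asset_id: str, issue: str, rul_hours: float, technician: str,
                      estimated_repair_hours: float, spare_parts: list,
                      safety_checks: list) -> WorkOrder:
    """Assemble the ticket from model output and maintenance metadata."""
    return WorkOrder(asset_id, issue, priority_from_rul(rul_hours), technician,
                     estimated_repair_hours, list(spare_parts), list(safety_checks))


order = create_work_order(
    "press-01", "bearing wear trend", rul_hours=60.0, technician="tech-on-call",
    estimated_repair_hours=3.0, spare_parts=["bearing (hypothetical part no.)"],
    safety_checks=["lockout/tagout", "confirm zero energy state"],
)
print(asdict(order))  # dictionary ready to serialize for the MES
```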

Mobile Technician App Integration

Technicians receive push notifications on their mobile devices with asset location, issue description, and recommended actions. The app can also capture photos and update status in real time, closing the loop between field and back‑office.

7. Test, Validate, and Iterate

Pilot Run on a Single Machine

Deploy the full stack—sensors, edge, cloud, AI models—on one high‑value machine. Run for 30 days, then evaluate KPIs: MTTR, MTBF, model accuracy, and technician feedback.

KPI Tracking: MTTR, MTBF, ROI

Calculate the return on investment by comparing maintenance costs before and after implementation. Track MTTR and MTBF weekly to spot improvement curves.
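
The calculation can be written down in a few lines. This sketch uses the common definition of ROI as net savings divided by investment, plus a simple payback period; all figures are made up for illustration.

```python
from typing import Optional


def roi(cost_before: float, cost_after: float, investment: float) -> float:
    """ROI = (savings - investment) / investment, over the same period."""
    savings = cost_before - cost_after
    return (savings - investment) / investment


def payback_months(monthly_cost_before: float, monthly_cost_after: float,
                   investment: float) -> Optional[float]:
    """Months until cumulative savings cover the investment, or None if no savings."""
    monthly_savings = monthly_cost_before - monthly_cost_after
    if monthly_savings <= 0:
        return None
    return investment / monthly_savings


# Hypothetical annual maintenance cost before and after, and the pilot's cost.
print(f"ROI: {roi(50_000, 30_000, 10_000):.0%}")
print(f"Payback: {payback_months(5_000, 3_000, 10_000):.1f} months")
```

Maintenance cost here should include the cost of unscheduled downtime, not just parts and labor, or the savings will be understated.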

8. Scale Across the Facility

Deployment Playbook

Create a standardized bundle: sensor kit, edge device configuration, cloud deployment scripts, and training materials. Use Infrastructure as Code (IaC) tools like Terraform to spin up new twin instances quickly.

Change Management and Staff Training

Conduct workshops for operators and maintenance staff, focusing on interpreting twin dashboards, responding to alerts, and updating asset records. Use gamification elements—badge achievements for first successful predictive maintenance—to accelerate adoption.

9. Ensure Data Security and Compliance

Encryption, Authentication, and IAM

Encrypt data in transit with TLS 1.3 and at rest with AES‑256. Employ role‑based access control (RBAC) in the cloud, and authenticate devices with X.509 certificates or a managed service such as AWS IoT Core’s device identity.
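
On the device side, enforcing TLS 1.3 and presenting a client certificate can look like the sketch below, which uses Python's standard `ssl` module. The function name and the file-path parameters are placeholders; the resulting context would be passed to whichever MQTT or HTTPS client the edge device uses.

```python
import ssl
from typing import Optional


def make_device_tls_context(ca_file: Optional[str] = None,
                            cert_file: Optional[str] = None,
                            key_file: Optional[str] = None) -> ssl.SSLContext:
    """Client-side TLS context that refuses anything older than TLS 1.3."""
    # Defaults already verify the server certificate and hostname.
    ctx = ssl.create_default_context(ssl.Purpose.SERVER_AUTH, cafile=ca_file)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_3
    if cert_file:
        # The device's X.509 certificate and private key for mutual TLS.
        ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)
    return ctx


ctx = make_device_tls_context()  # pass real certificate paths on an actual device
print(ctx.minimum_version.name)
```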

GDPR, ISO 27001, and Industrial Standards

Even though production data is usually non‑personal, technician names and location data handled by the mobile app can fall under GDPR. Maintain audit logs, secure backup strategies, and regular penetration testing. Align with ISO 27001 to formalize your information security management system.

10. Maintain the Digital Twin Ecosystem

Firmware Updates, Sensor Calibration

Schedule quarterly firmware updates for edge devices and sensors. Automate sensor calibration checks via scheduled self‑diagnostics, logging results back to the twin.

Periodic Model Retraining

Set a cadence—monthly or quarterly—to retrain predictive models with the latest data. Version models and track performance regressions to ensure continuous improvement.

By following this roadmap, small factories can deploy a digital twin ecosystem that not only anticipates equipment failure but also streamlines maintenance workflows. With real‑time insights, proactive interventions, and a scalable architecture, downtime shrinks and asset life extends, delivering tangible value in 2026 and beyond.