Generative AI Models with Differential Privacy: The New Frontier for Synthetic Healthcare Data

In today’s data‑driven medical landscape, the tension between leveraging patient information for research and preserving privacy has reached a critical point. Generative AI models with differential privacy are emerging as a breakthrough solution that allows scientists to create realistic, synthetic datasets without exposing real patient identities. This article explores how these advanced techniques are redefining data sharing, the safeguards they provide, and what the future holds for healthcare innovation.

What Is Synthetic Healthcare Data?

Synthetic healthcare data are computer‑generated records that mimic the statistical properties of real patient data (demographics, lab results, imaging findings) without containing any direct identifiers. Think of it as a "stand‑in" dataset that preserves the patterns and correlations needed for analysis while greatly reducing the risk of reidentifying individuals. Researchers can train machine learning models, validate algorithms, and perform epidemiological studies using these stand‑ins, often sidestepping many of the regulatory hurdles associated with real data.

The Role of Generative AI

At the heart of synthetic data creation lies generative AI, particularly Generative Adversarial Networks (GANs) and diffusion models. These architectures learn to approximate the probability distribution of real records, so new samples share their statistical properties. A GAN pairs two neural networks: a generator that creates synthetic data and a discriminator that tries to tell synthetic records from real ones. Over many iterations, the generator refines its outputs to fool the discriminator, and its samples come to match the real data increasingly closely. Diffusion models take a different route. They learn to reverse a gradual noising process, turning random noise step by step into a realistic record.

Why Traditional Methods Fall Short

- Manual de‑identification is laborious and prone to error, often leaving residual quasi‑identifiers.

- Noise addition techniques (e.g., Laplace or Gaussian mechanisms) can degrade data utility if applied excessively.

- Complex clinical data structures—such as longitudinal visits, multimodal imaging, and free‑text notes—are difficult to anonymize while maintaining clinical relevance.

Generative AI addresses these challenges by learning high‑dimensional relationships across multiple data modalities, producing richer and more usable synthetic data.

Understanding Differential Privacy

Differential privacy (DP) is a mathematical framework that bounds how much any single individual can affect a computation. Including or excluding one person's data may change the probability of any output by at most a small factor (e^ε). In practice, DP adds carefully calibrated random noise, so aggregate patterns survive while individual contributions are masked. Key concepts include:

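- Privacy budget (ε): This bounds how much any one person's data can shift the output. A lower ε provides stronger privacy but usually reduces data utility. Many practical mechanisms, including DP‑SGD, use a relaxed (ε, δ) form, where δ is a small probability that the ε bound does not hold.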

- Composition theorems: These quantify how privacy loss accumulates across multiple queries or model releases on the same data. In the simplest form, the ε values add up.

- Post‑processing invariance: Any further computation on a DP output that does not access the raw data again cannot weaken its privacy guarantee.
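To make ε, composition, and post‑processing concrete, here is a minimal sketch of the Laplace mechanism applied to a single counting query, such as "how many patients carry a given diagnosis." It uses only Python's standard library. The cohort count and the helper names (`laplace_noise`, `laplace_count`, `post_process`, `basic_composition`) are hypothetical illustrations. Production systems should rely on a vetted DP library rather than hand‑rolled noise, because naive floating‑point sampling can leak information.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Draw one sample from a Laplace(0, scale) distribution.

    The difference of two independent exponential draws with mean `scale`
    is Laplace-distributed with that same scale.
    """
    # random.expovariate takes a rate, so rate = 1 / scale gives mean `scale`.
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def laplace_count(true_count: int, epsilon: float, rng: random.Random) -> float:
    """Release a count under epsilon-DP with the Laplace mechanism.

    Adding or removing one patient changes a count by at most 1
    (sensitivity = 1), so noise with scale 1 / epsilon is sufficient.
    """
    sensitivity = 1.0
    return true_count + laplace_noise(sensitivity / epsilon, rng)


def post_process(noisy_count: float) -> int:
    """Round and clamp to a non-negative integer.

    This touches only the noisy output, never the raw data, so by
    post-processing invariance the epsilon guarantee is unchanged.
    """
    return max(0, round(noisy_count))


def basic_composition(epsilons: list[float]) -> float:
    """Basic sequential composition: epsilons of releases on the same data add up."""
    return sum(epsilons)


if __name__ == "__main__":
    rng = random.Random(0)
    true_count = 132  # hypothetical number of patients with a given diagnosis
    for eps in (0.1, 1.0, 10.0):
        released = post_process(laplace_count(true_count, eps, rng))
        print(f"epsilon={eps}: released count = {released}")
    # Three queries at epsilon = 0.5 each consume a total budget of 1.5.
    print("total budget spent:", basic_composition([0.5, 0.5, 0.5]))
```

Smaller ε values produce visibly noisier counts. That is the privacy/utility trade‑off the privacy budget controls.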

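When DP is combined with generative AI, it is most commonly applied during training. A standard method is differentially private stochastic gradient descent (DP‑SGD), which clips each example's gradient and adds noise before every update. Because of post‑processing invariance, every sample drawn from the resulting model inherits the same guarantee. As a result, no single patient's information can disproportionately influence the synthetic output.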
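The DP‑SGD recipe can be sketched in a few lines of NumPy. This simplified illustration uses a toy logistic‑regression model, not a generative model. Each example's gradient is clipped to a maximum L2 norm, Gaussian noise scaled to that norm is added to the summed gradients, and the result is averaged. The names `dp_sgd_step`, `clip_norm`, and `noise_multiplier` are assumptions for this sketch. It omits privacy accounting, meaning the step that converts noise level, sampling rate, and step count into an overall ε. Libraries such as TensorFlow Privacy handle that step. The sketch also uses fixed‑size random batches, whereas the standard analysis assumes Poisson sampling.

```python
import numpy as np


def per_example_grads(w: np.ndarray, X: np.ndarray, y: np.ndarray) -> np.ndarray:
    """Logistic-loss gradient for each example separately, shape (batch, features)."""
    p = 1.0 / (1.0 + np.exp(-X @ w))  # predicted probabilities
    return (p - y)[:, None] * X       # one gradient row per example


def dp_sgd_step(w, X, y, lr, clip_norm, noise_multiplier, rng):
    """One DP-SGD update: clip per-example gradients, add Gaussian noise, average."""
    grads = per_example_grads(w, X, y)
    # 1. Clip: scale down any per-example gradient whose L2 norm exceeds clip_norm.
    norms = np.linalg.norm(grads, axis=1, keepdims=True)
    clipped = grads / np.maximum(1.0, norms / clip_norm)
    # 2. Add Gaussian noise with std = noise_multiplier * clip_norm to the summed gradient.
    noise = rng.normal(0.0, noise_multiplier * clip_norm, size=w.shape)
    noisy_mean = (clipped.sum(axis=0) + noise) / len(X)
    # 3. Ordinary gradient-descent step on the privatized gradient.
    return w - lr * noisy_mean


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # Toy stand-in for patient features and a binary outcome.
    X = rng.normal(size=(256, 3))
    y = (X[:, 0] + 0.5 * X[:, 1] > 0).astype(float)
    w = np.zeros(3)
    for _ in range(200):
        batch = rng.choice(len(X), size=64, replace=False)
        w = dp_sgd_step(w, X[batch], y[batch], lr=0.5,
                        clip_norm=1.0, noise_multiplier=1.1, rng=rng)
    print("learned weights:", w)
```

Clipping bounds how much any one record can move the model, and the noise masks whatever influence remains. This per‑example work is also why DP training is slower than ordinary training.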

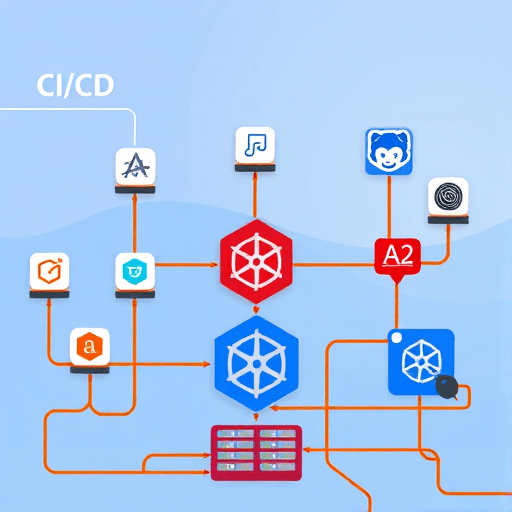

How Generative AI and Differential Privacy Work Together

The synergy between these technologies is twofold:

- Privacy‑Preserving Training: Differential privacy mechanisms are built into the gradient updates during model training. In a GAN, only the discriminator sees real records, so it is typically the component trained with DP‑SGD. This limits how much the generator can learn about any single real record.

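- Privacy‑Guaranteed Samples: After training, the generator produces synthetic data that inherit the model's DP guarantee through post‑processing. This does not make each synthetic record statistically independent of the real data. It means the distribution of synthetic outputs would be nearly the same whether or not any particular patient had been included in training.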

In practice, a DP‑GAN or DP‑diffusion model can produce hundreds of thousands of synthetic patient records that retain crucial clinical correlations, such as comorbidity patterns or medication sequences, while maintaining robust privacy guarantees. Because sampling is post‑processing, generating additional records does not consume additional privacy budget.
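To show the "sample as much as you like" property in isolation, the sketch below replaces the DP‑GAN with a much simpler DP generator: a noisy histogram over one categorical attribute. It is a deliberately swapped‑in technique, not a GAN. The blood‑group data and the function names (`fit_dp_histogram`, `sample_synthetic`) are hypothetical. The privacy cost is paid once, when the histogram is fitted. Every draw afterwards is post‑processing.

```python
import random
from collections import Counter


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Laplace(0, scale) sample as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def fit_dp_histogram(records, categories, epsilon, rng):
    """Fit an epsilon-DP probability table over one categorical attribute.

    `categories` must be fixed in advance (not read from the data). Adding or
    removing one patient changes one bin by 1, so Laplace noise with scale
    1 / epsilon on every bin protects the whole histogram.
    """
    counts = Counter(records)
    noisy = {c: counts.get(c, 0) + laplace_noise(1.0 / epsilon, rng) for c in categories}
    # Post-processing (no extra privacy cost): clamp negatives, then normalize.
    clamped = {c: max(0.0, v) for c, v in noisy.items()}
    total = sum(clamped.values())
    if total == 0:
        return {c: 1.0 / len(categories) for c in categories}
    return {c: v / total for c, v in clamped.items()}


def sample_synthetic(probs, n, rng):
    """Draw n synthetic records; sampling is post-processing, so n is unlimited."""
    cats = list(probs)
    return rng.choices(cats, weights=[probs[c] for c in cats], k=n)


if __name__ == "__main__":
    rng = random.Random(42)
    categories = ["A", "B", "AB", "O"]  # public list of blood groups
    real = ["O"] * 450 + ["A"] * 400 + ["B"] * 110 + ["AB"] * 40  # toy data
    probs = fit_dp_histogram(real, categories, epsilon=1.0, rng=rng)
    synthetic = sample_synthetic(probs, 100_000, rng)  # same epsilon for any n
    print(Counter(synthetic).most_common())
```

Real DP generators model many attributes jointly, which is much harder. The accounting logic is the same, though: spend ε once during fitting, then sample freely.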

Key Benefits for Researchers

- Faster data sharing across institutions, often with simpler data use agreements than real patient data require.

- Scalability: Generate as many synthetic records as needed for large‑scale machine learning.

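- Regulatory alignment: DP synthetic data can support compliance with GDPR, HIPAA, and other privacy frameworks. Whether a given dataset counts as de‑identified is still a legal determination.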

- Accelerated innovation by enabling iterative testing and validation cycles.

Ethical and Legal Considerations

While synthetic data significantly reduce privacy risks, they are not a panacea. Key ethical and legal issues include:

- Residual risk of reidentification if synthetic data inadvertently encode unique patterns.

- Bias propagation—if the training data contain systemic biases, synthetic data will replicate them.

- Transparency—clear documentation of privacy budgets and data generation processes is essential for reproducibility and trust.

- Intellectual property—synthetic data may still be subject to ownership claims tied to the underlying real data.
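One common sanity check for residual reidentification risk is to look for synthetic records that copy real ones, especially records that are unique in the real data. The sketch below assumes each record is a tuple of quasi‑identifiers, such as age band, sex, and a truncated postal code. The function name and toy data are hypothetical. A low score is not a privacy proof, and with coarse attributes some matches occur by chance. The formal protection still comes from DP training.

```python
from collections import Counter


def memorization_audit(real, synthetic):
    """Share of synthetic records that copy a real record, and that copy a unique one.

    Records are hashable tuples of quasi-identifiers. A copy of a record that is
    unique in the real data is the riskiest case, since it may single out one patient.
    """
    real_counts = Counter(real)
    exact = sum(1 for r in synthetic if r in real_counts)
    unique_hits = sum(1 for r in synthetic if real_counts.get(r) == 1)
    n = len(synthetic)
    return exact / n, unique_hits / n


if __name__ == "__main__":
    # Toy records: (age band, sex, 3-digit postal prefix).
    real = [("40-49", "F", "021"), ("40-49", "F", "021"), ("80-89", "M", "994")]
    synthetic = [("40-49", "F", "021"), ("80-89", "M", "994"), ("50-59", "M", "100")]
    exact, unique = memorization_audit(real, synthetic)
    print(f"exact copies: {exact:.0%}, copies of unique records: {unique:.0%}")
```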

Ethical frameworks and regulatory guidance are evolving to address these nuances, emphasizing the need for rigorous audit trails and stakeholder engagement.

Implementation Challenges

Adopting DP‑enabled generative AI in clinical research requires careful planning:

- Computational resources: Training large models with DP adds significant overhead. DP‑SGD must compute and clip a gradient for every individual example rather than a single averaged gradient per batch.

- Hyperparameter tuning: Balancing ε, model capacity, and data utility is non‑trivial and often domain‑specific.

- Data heterogeneity: Real clinical datasets encompass structured, unstructured, and temporal data, demanding sophisticated multi‑modal models.

- Stakeholder buy‑in: Clinicians, data custodians, and patients must trust the synthetic outputs for them to be widely adopted.
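Part of the tuning difficulty is that noise grows quickly as ε shrinks. As a rough illustration, the sketch below assumes the classic Gaussian‑mechanism calibration for a single release, which is only proven for 0 < ε < 1, and shows how the required noise standard deviation scales with ε. `gaussian_sigma` is a hypothetical helper. DP‑SGD budgets are computed with tighter accountants in practice, so these numbers do not transfer directly to model training.

```python
import math


def gaussian_sigma(sensitivity: float, epsilon: float, delta: float) -> float:
    """Noise std for the classic (epsilon, delta) Gaussian mechanism, single release.

    sigma = sensitivity * sqrt(2 * ln(1.25 / delta)) / epsilon,
    where sensitivity is the query's L2 sensitivity. The classic analysis
    only covers 0 < epsilon < 1.
    """
    if not 0.0 < epsilon < 1.0:
        raise ValueError("classic bound requires 0 < epsilon < 1")
    if not 0.0 < delta < 1.0:
        raise ValueError("delta must be in (0, 1)")
    return sensitivity * math.sqrt(2.0 * math.log(1.25 / delta)) / epsilon


if __name__ == "__main__":
    # Sweep the budget for one query with L2 sensitivity 1 and delta = 1e-5.
    for eps in (0.1, 0.25, 0.5, 0.9):
        print(f"epsilon={eps:<5} sigma={gaussian_sigma(1.0, eps, 1e-5):.2f}")
```

Because σ is proportional to 1/ε, halving the budget doubles the noise. This is why ε, model capacity, and dataset size have to be tuned together.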

Organizations are beginning to rely on open‑source libraries to streamline development. Examples include Google's TensorFlow Privacy, Meta's Opacus for PyTorch, and the SmartNoise toolkit from Microsoft and OpenDP.

Case Studies

Several landmark projects illustrate the power of these combined techniques:

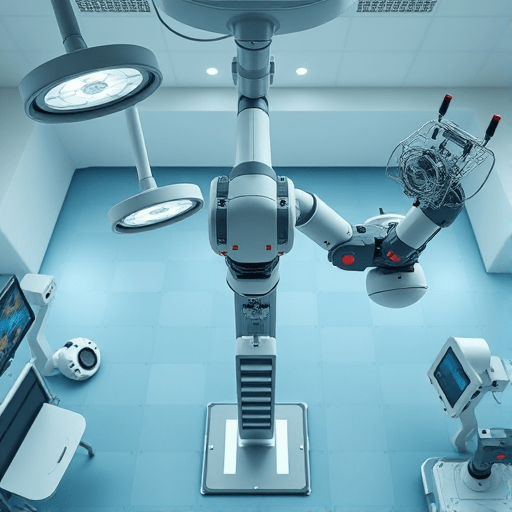

1. The Mayo Clinic DP‑Synthetic Database

Using a DP‑GAN, the Mayo Clinic released a synthetic version of its cardiac imaging dataset. Researchers validated predictive models for heart failure using the synthetic data and later confirmed performance on the real dataset, achieving comparable accuracy.

2. The UK Biobank Synthetic Release

The UK Biobank collaborated with a fintech firm to generate DP‑protected synthetic health records. The resulting dataset supported a genome‑wide association study (GWAS) that identified novel loci for type 2 diabetes without exposing individual genotypes.

3. The Cancer Genome Atlas (TCGA) Synthetic Initiative

By applying DP‑diffusion models, TCGA produced synthetic genomic, proteomic, and imaging datasets that enabled cross‑institutional AI training for personalized oncology treatment plans.

These examples underscore how DP‑generative AI facilitates high‑quality research while honoring privacy obligations.

Future Directions

The convergence of generative AI and differential privacy is set to accelerate along several fronts:

- Zero‑knowledge synthetic data—integrating cryptographic techniques to further reduce trust dependencies.

- Adaptive privacy budgets—allowing dynamic adjustment of ε based on downstream usage.

- Federated synthetic data generation—enabling multiple institutions to collaborate without sharing raw data.

- Standardized evaluation metrics—establishing benchmarks for utility, privacy, and bias in synthetic datasets.

- Regulatory frameworks—anticipating legislation that explicitly recognizes synthetic data as compliant with privacy laws.
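One example of a utility metric such benchmarks could include is marginal fidelity. The sketch below scores how well synthetic data reproduce each column's distribution, using total variation distance (TVD). The function names are hypothetical. Marginal fidelity is only one possible check, and it says nothing about correlations between columns, privacy, or bias.

```python
from collections import Counter


def total_variation(real_col, synth_col):
    """Total variation distance between two empirical categorical distributions.

    0.0 means identical frequencies; 1.0 means no overlap at all.
    """
    p, q = Counter(real_col), Counter(synth_col)
    n_p, n_q = len(real_col), len(synth_col)
    support = set(p) | set(q)
    # Counter returns 0 for missing keys, so categories seen in only one set still count.
    return 0.5 * sum(abs(p[k] / n_p - q[k] / n_q) for k in support)


def marginal_fidelity(real_rows, synth_rows):
    """Average of (1 - TVD) over columns; rows are equal-length tuples."""
    n_cols = len(real_rows[0])
    scores = [
        1.0 - total_variation([r[i] for r in real_rows], [s[i] for s in synth_rows])
        for i in range(n_cols)
    ]
    return sum(scores) / n_cols


if __name__ == "__main__":
    real = [("F", "diabetic"), ("M", "healthy"), ("F", "healthy"), ("M", "healthy")]
    synth = [("F", "healthy"), ("M", "healthy"), ("F", "diabetic"), ("F", "healthy")]
    print(f"marginal fidelity: {marginal_fidelity(real, synth):.3f}")
```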

As the technology matures, we can expect synthetic healthcare data to become a mainstream resource, democratizing access to rich clinical information while safeguarding patient rights.

Conclusion

Generative AI models infused with differential privacy represent a paradigm shift in how we handle healthcare data. By producing high‑fidelity, privacy‑protected synthetic datasets, researchers can unlock insights that were previously blocked by regulatory and ethical barriers. While challenges remain—particularly around bias mitigation, resource demands, and stakeholder trust—the trajectory is unmistakably toward broader adoption and deeper integration into the research ecosystem.

Embrace this new frontier to advance medical science responsibly and ethically.
