Small solar farms often operate on tight margins, and any unplanned downtime can erode profits quickly. This quick guide explains how to deploy AI-powered predictive maintenance on a budget‑friendly Raspberry Pi using TensorFlow Lite to spot inverter anomalies before they fail, cutting downtime by up to 30%. By the end, you’ll understand the hardware setup, data pipeline, model training, and edge deployment steps that make proactive maintenance a reality for a modest solar installation.

Why Predictive Maintenance Matters for Small Solar Farms

- Inverters are the heart of a solar array; a single failure can halt all production.

- Predictive maintenance replaces reactive repairs, saving labor and spare parts.

- AI models can analyze subtle patterns in voltage, current, and temperature that human operators might miss.

- Reducing downtime by 30% translates directly into higher energy yield and better return on investment.

Hardware Blueprint: Raspberry Pi + Sensors + Inverter Interface

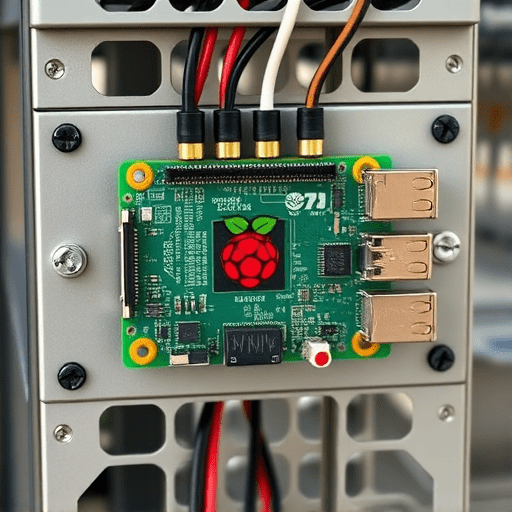

Choosing the Right Pi

For 2026, the Raspberry Pi 5 (8 GB RAM) is a capable board for edge inference. It has a quad‑core Cortex‑A76 CPU but no integrated neural accelerator, so TensorFlow Lite models run on the CPU. A small quantized autoencoder is well within its reach.

Sensing the Inverter

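- Current and Voltage: Use a CT sensor (e.g., SCT‑013‑000) for phase current and a voltage divider for 400 V AC. A bare resistor divider provides no galvanic isolation, so place it behind an isolation stage before it reaches the Pi. The Pi has no analog inputs, so read both signals through an external ADC. Calibrate each channel for ±0.5 % accuracy.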

- Temperature: Place a digital sensor (e.g., DS18B20) near the inverter’s heat sink to track thermal drift.

- Ambient Light: A photodiode can help correlate inverter performance with irradiance.

Connecting to the Inverter

The inverter’s Modbus RTU interface (typically RS‑485) can be accessed via a USB‑to‑RS‑485 adapter; a CAN bus interface needs a separate CAN adapter. A simple serial script on the Pi can then pull real‑time metrics like output power, DC/AC voltage, and fault codes.
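As a rough illustration of what that serial script has to do, the sketch below builds a Modbus RTU "Read Holding Registers" request and validates a response using only the standard library. The unit ID and register address are hypothetical; the real register map comes from your inverter's documentation. Actual I/O (for example through a serial library) is left out.

```python
import struct

def crc16_modbus(data: bytes) -> int:
    """CRC-16/MODBUS: initial value 0xFFFF, reflected polynomial 0xA001."""
    crc = 0xFFFF
    for byte in data:
        crc ^= byte
        for _ in range(8):
            if crc & 1:
                crc = (crc >> 1) ^ 0xA001
            else:
                crc >>= 1
    return crc

def build_read_holding_request(unit_id: int, start_addr: int, count: int) -> bytes:
    """Build a function-0x03 (Read Holding Registers) request frame."""
    pdu = struct.pack(">BBHH", unit_id, 0x03, start_addr, count)
    # Modbus RTU appends the CRC with the low byte first.
    return pdu + struct.pack("<H", crc16_modbus(pdu))

def parse_read_holding_response(frame: bytes) -> list:
    """Check the CRC and return the 16-bit register values as integers."""
    if len(frame) < 5:
        raise ValueError("frame too short")
    body, received_crc = frame[:-2], struct.unpack("<H", frame[-2:])[0]
    if crc16_modbus(body) != received_crc:
        raise ValueError("CRC mismatch")
    if body[1] & 0x80:
        raise ValueError(f"Modbus exception code {body[2]}")
    byte_count = body[2]
    if byte_count != len(body) - 3 or byte_count % 2:
        raise ValueError("unexpected byte count")
    return list(struct.unpack(f">{byte_count // 2}H", body[3:]))

# Hypothetical example: unit 1, one register starting at address 0.
request = build_read_holding_request(unit_id=1, start_addr=0, count=1)
```

The request bytes would be written to the serial port and the reply passed to `parse_read_holding_response`. Scaling raw register values into volts, amps, or watts depends on the inverter model.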

Data Pipeline: From Raw Signals to Clean Features

Acquisition Frequency

Sampling at 10 Hz balances resolution with storage limits. Store data in a lightweight InfluxDB instance for time‑series queries.
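To make the storage side concrete, here is a minimal sketch that estimates the daily sample count at 10 Hz and formats one reading in InfluxDB line protocol. The measurement, tag, and field names (`inverter`, `unit`, `voltage_v`, `current_a`) are illustrative assumptions, and the formatter assumes names without spaces or commas, so no escaping is done.

```python
SAMPLE_RATE_HZ = 10
SECONDS_PER_DAY = 86_400

# Rows written per day for one inverter at the stated sampling rate.
SAMPLES_PER_DAY = SAMPLE_RATE_HZ * SECONDS_PER_DAY

def to_line_protocol(measurement, tags, fields, ts_ns):
    """Format one point as '<measurement>,<tags> <fields> <timestamp_ns>'."""
    tag_part = "".join(f",{k}={v}" for k, v in sorted(tags.items()))
    field_part = ",".join(f"{k}={float(v)}" for k, v in sorted(fields.items()))
    return f"{measurement}{tag_part} {field_part} {ts_ns}"

line = to_line_protocol(
    "inverter",                                   # hypothetical measurement name
    {"unit": "inv01"},                            # hypothetical tag
    {"voltage_v": 401.0, "current_a": 12.5},      # hypothetical fields
    1_700_000_000_000_000_000,                    # timestamp in nanoseconds
)
```

At 864,000 rows per inverter per day, batching writes rather than sending one point at a time is usually worthwhile.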

Preprocessing Steps

- Noise Filtering: Apply a 4th‑order Butterworth low‑pass filter with a cutoff of 1 Hz to smooth spikes.

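- Feature Extraction: Compute moving averages, RMS values, and spectral power in 0.1‑Hz bands. Frequency resolution is the inverse of the window length, so 0.1‑Hz bands need an analysis window of at least 10 seconds.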

- Normalization: Scale each feature to zero mean and unit variance using a mean and variance pre‑computed on the training data, and reuse those same statistics at inference time.

- Labeling: Use historical fault logs to tag data windows as “normal” or “anomaly.”
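Some of the preprocessing steps above can be sketched in plain Python. This example covers only the moving average, RMS, and normalization. The Butterworth filter and spectral features are omitted (a signal-processing library such as SciPy is the usual choice for those), and the mean and standard deviation passed to `standardize` are assumed to come from the training data.

```python
import math

def moving_average(values, width):
    """Trailing moving average; early outputs average over fewer samples."""
    out, running = [], 0.0
    for i, v in enumerate(values):
        running += v
        if i >= width:
            running -= values[i - width]  # drop the sample leaving the window
        out.append(running / min(i + 1, width))
    return out

def rms(values):
    """Root-mean-square of a window of samples."""
    return math.sqrt(sum(v * v for v in values) / len(values))

def standardize(value, mean, std):
    """Scale a feature using pre-computed training statistics."""
    return (value - mean) / std

smoothed = moving_average([1.0, 2.0, 3.0, 4.0], width=2)
window_rms = rms([1.0, -1.0, 1.0, -1.0])
z = standardize(12.0, mean=10.0, std=4.0)
```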

Model Development: TensorFlow Lite for Edge Inference

Choosing an Architecture

For anomaly detection, a lightweight 1‑D Convolutional Autoencoder (CAE) is ideal. It learns to reconstruct normal data; high reconstruction error signals anomalies.

Training Pipeline

- Split data into training (80 %), validation (10 %), and test (10 %).

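- Use the `tf.keras.optimizers.Adam` optimizer with a learning rate of 1e-3, and train for up to 30 epochs with early stopping.

- Convert the trained model to TensorFlow Lite with post‑training quantization to reduce size and inference latency. The `tflite_convert --post_training_quantize` flag is a legacy option of the TensorFlow 1.x command‑line tool; in TensorFlow 2.x the usual route is to set `optimizations = [tf.lite.Optimize.DEFAULT]` on a `tf.lite.TFLiteConverter`.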
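The 80/10/10 split can be sketched as follows. Two things here are assumptions rather than something the guide specifies: the split is chronological (no shuffling) to avoid leaking future data into training, and the autoencoder's training set is filtered to "normal" windows only, since it is meant to learn normal behavior.

```python
def chronological_split(windows, train_frac=0.8, val_frac=0.1):
    """Split time-ordered windows into train/validation/test without shuffling."""
    n = len(windows)
    train_end = int(n * train_frac)
    val_end = train_end + int(n * val_frac)
    return windows[:train_end], windows[train_end:val_end], windows[val_end:]

def normal_only(labeled_windows):
    """Keep the feature windows whose label is 'normal'."""
    return [w for w, label in labeled_windows if label == "normal"]

# Toy data: 100 labeled windows with a hypothetical fault at index 42.
labeled = [([float(i)], "anomaly" if i == 42 else "normal") for i in range(100)]
train, val, test = chronological_split(labeled)
train_inputs = normal_only(train)
```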

Edge Deployment on Raspberry Pi

- Install the `tflite_runtime` Python package (published on PyPI as `tflite-runtime`); the full TensorFlow package is not needed on the Pi for inference.

- Create a daemon script that:

- Reads the latest 5‑second window of sensor data.

- Runs the CAE model.

- Calculates reconstruction error.

- Triggers an HTTP POST to a monitoring dashboard if the error exceeds a threshold.
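The daemon steps above might look roughly like this. It is a simplified single-channel sketch: `infer` stands in for the TensorFlow Lite model call and `on_alert` for the HTTP POST, both injected as plain callables so the logic can be exercised without hardware. The class and parameter names are hypothetical.

```python
from collections import deque

SAMPLE_RATE_HZ = 10
WINDOW_SECONDS = 5
WINDOW_SIZE = SAMPLE_RATE_HZ * WINDOW_SECONDS  # 50 samples per window

def reconstruction_error(window, reconstruction):
    """Mean squared error between the input window and the model output."""
    return sum((x - y) ** 2 for x, y in zip(window, reconstruction)) / len(window)

class AnomalyMonitor:
    def __init__(self, infer, threshold, on_alert):
        self.infer = infer          # on the Pi: a wrapper around the TFLite interpreter
        self.threshold = threshold
        self.on_alert = on_alert    # on the Pi: e.g. an HTTP POST to the dashboard
        self.buffer = deque(maxlen=WINDOW_SIZE)

    def add_sample(self, value):
        """Append a sample; once the buffer is full, score the latest window."""
        self.buffer.append(value)
        if len(self.buffer) < WINDOW_SIZE:
            return None
        window = list(self.buffer)
        error = reconstruction_error(window, self.infer(window))
        if error > self.threshold:
            self.on_alert(error, window)
        return error

# Stand-in model that always reconstructs zeros, for demonstration only.
alerts = []
monitor = AnomalyMonitor(
    infer=lambda w: [0.0] * len(w),
    threshold=1.0,
    on_alert=lambda err, win: alerts.append(err),
)
```

This version scores a sliding window on every new sample. Scoring once per non-overlapping 5‑second window would cut CPU load at the cost of slower detection.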

Operationalizing Anomaly Alerts

Threshold Calibration

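Set the initial threshold at the 95th percentile of reconstruction error on the validation set. If that set contains only normal data, roughly 5 % of normal windows will exceed this threshold by construction, so expect to tune it in the field based on the observed false‑positive rate.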
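A minimal sketch of the percentile calculation, using the nearest-rank method (one of several common percentile definitions, chosen here as an assumption). The validation errors are synthetic placeholders.

```python
import math

def percentile_nearest_rank(values, pct):
    """Return the smallest value with at least pct percent of the data at or below it."""
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]

# Placeholder validation errors: 0.01, 0.02, ..., 1.00
validation_errors = [i / 100 for i in range(1, 101)]
threshold = percentile_nearest_rank(validation_errors, 95)
```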

Integration with Maintenance Workflow

- Use MQTT to publish anomaly events to a central broker.

- Automate ticket creation in your existing maintenance system via REST APIs.

- Log each event with timestamp, error value, and sensor snapshot for later review.
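One possible shape for the event record is a small JSON payload, sketched below. The field names and the inverter ID are hypothetical. The same string could serve as the MQTT message body and as the log entry.

```python
import json
from datetime import datetime, timezone

def build_anomaly_event(inverter_id, error, threshold, snapshot, now=None):
    """Serialize one anomaly event with timestamp, error value, and sensor snapshot."""
    now = now or datetime.now(timezone.utc)
    return json.dumps(
        {
            "inverter_id": inverter_id,
            "timestamp": now.isoformat(),
            "reconstruction_error": error,
            "threshold": threshold,
            "snapshot": snapshot,
        },
        sort_keys=True,
    )

payload = build_anomaly_event(
    "inv01",                                            # hypothetical inverter ID
    error=0.42,
    threshold=0.30,
    snapshot={"voltage_v": 398.7, "current_a": 11.9, "temp_c": 71.5},
    now=datetime(2026, 1, 1, tzinfo=timezone.utc),
)
```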

Testing, Validation, and Continuous Improvement

Simulating Failures

Introduce known faults (e.g., shorted output, over‑temperature) in a lab environment to verify detection accuracy.

Model Retraining Schedule

Every 6 months, retrain the CAE with the latest 6 months of data to adapt to aging components and changing environmental patterns.

Performance Monitoring

Track inference latency (<5 ms per sample), CPU usage (<30 % on Pi 5), and network bandwidth (<1 MB/day). If metrics drift, consider model pruning or hardware upgrade.
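Inference latency can be checked against the 5 ms target with a small timing harness like the sketch below. The `infer` callable is a placeholder for the real model call, and the run count is an arbitrary choice.

```python
import statistics
import time

def measure_latency_ms(infer, window, runs=100):
    """Time repeated inference calls; return (median, worst case) in milliseconds."""
    timings = []
    for _ in range(runs):
        start = time.perf_counter()
        infer(window)
        timings.append((time.perf_counter() - start) * 1000.0)
    return statistics.median(timings), max(timings)

# Placeholder workload standing in for the TFLite model call.
median_ms, worst_ms = measure_latency_ms(lambda w: [x * 2 for x in w], [0.0] * 50)
```

Logging the median alongside the worst case helps distinguish steady drift from occasional spikes caused by other processes on the Pi.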

Scaling Up: From Single Inverter to Multiple Units

To monitor a 100‑kW array with 10 inverters, deploy one Pi per inverter. Aggregate alerts on a cloud dashboard built with Grafana. Use Terraform to spin up the required infrastructure, keeping costs below $0.05 per hour.

Security and Reliability Considerations

- Encrypt MQTT traffic with TLS 1.3.

- Implement a local fail‑safe: if the Pi disconnects, the inverter’s native monitoring takes over.

- Schedule nightly backups of sensor data to an off‑site bucket.
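For the TLS requirement, Python's standard `ssl` module can build a context that refuses anything older than TLS 1.3. This is a sketch only: the optional `ca_file` argument is an assumption for brokers that use a private certificate authority, and the resulting context still has to be handed to whichever MQTT client library you use.

```python
import ssl

def make_tls13_context(ca_file=None):
    """Client-side TLS context with certificate checks on and TLS 1.3 as the floor."""
    ctx = ssl.create_default_context(cafile=ca_file)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_3
    return ctx

tls_context = make_tls13_context()
```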

Future-Proofing Your AI Maintenance Stack

With the rapid evolution of edge AI, keep an eye on:

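- Successor hardware such as a possible Raspberry Pi 6. This guide anticipates a Q4 2026 release with a 32‑core GPU accelerator, but treat both the date and the specification as unconfirmed until officially announced.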

- Quantization‑aware training to reduce model size further.

- Federated learning frameworks to share insights across multiple farms without compromising data privacy.

Conclusion

Implementing AI-powered predictive maintenance with a Raspberry Pi and TensorFlow Lite is a cost‑effective, scalable solution that empowers small solar farms to preempt inverter failures. By following this quick guide—setting up precise sensors, building a lightweight autoencoder, deploying it on edge hardware, and integrating alerts into your maintenance workflow—you can cut downtime by up to 30%, boost energy yield, and extend the life of critical components.