Self‑Optimizing Test Suites: How Reinforcement Learning Drives Continuous Test Evolution

Modern software delivery cycles demand testing that is both fast and thorough. Self‑optimizing test suites, in which reinforcement learning (RL) continuously prunes, prioritizes, and generates tests, are one approach to meeting this challenge. By learning which test cases are most likely to reveal defects, an RL engine can shorten cycle times while maintaining or even improving coverage.

1. The RL Primer for Testing

Reinforcement learning is a type of machine learning where an agent learns to make decisions by interacting with an environment and receiving feedback in the form of rewards. In the context of testing:

- Agent: The test selection engine.

- Environment: The codebase and its execution environment.

- Action: Selecting, skipping, or generating a test case.

- Reward: Feedback based on test outcomes, coverage metrics, or fault detection.

Unlike supervised learning, RL doesn’t need labeled training data; it learns from the outcomes of its own actions over time.
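To make the agent/environment/action/reward loop concrete, here is a minimal sketch that uses an epsilon-greedy multi-armed bandit, a deliberately simplified stand-in for a full RL agent. The names `SelectionAgent` and `run_episode`, and the idea of passing the environment as a callable that returns a reward, are assumptions made for illustration and do not come from any particular framework.

```python
import random


class SelectionAgent:
    """Epsilon-greedy agent that keeps a running value estimate per test."""

    def __init__(self, test_ids, epsilon=0.1, seed=0):
        self.values = {t: 0.0 for t in test_ids}  # estimated reward per test
        self.counts = {t: 0 for t in test_ids}    # how often each test ran
        self.epsilon = epsilon
        self.rng = random.Random(seed)

    def select(self):
        # Action: explore with probability epsilon, otherwise exploit.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(list(self.values))
        return max(self.values, key=self.values.get)

    def update(self, test_id, reward):
        # Incremental mean: V <- V + (r - V) / n
        self.counts[test_id] += 1
        n = self.counts[test_id]
        self.values[test_id] += (reward - self.values[test_id]) / n


def run_episode(agent, environment, steps):
    """`environment` is any callable mapping a test id to a numeric reward."""
    for _ in range(steps):
        test_id = agent.select()
        agent.update(test_id, environment(test_id))
    return agent.values
```

No labeled data appears anywhere in the loop: the only learning signal is the reward returned after each selected test runs.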

2. Building the Reward Signal

Designing an effective reward function is critical. A common strategy involves a multi‑objective reward that balances three dimensions:

- Fault Detection Likelihood: Tests that discover new bugs receive higher reward.

- Coverage Contribution: Tests covering previously uncovered code paths earn bonus points.

- Execution Cost: Shorter tests are rewarded to keep cycle times low.

These components can be weighted according to project priorities. For example, in safety‑critical domains, fault detection may dominate the reward, whereas in rapid prototyping, execution speed may be prioritized.
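One way to express such a multi-objective reward is a weighted sum. The sketch below assumes a hypothetical `Outcome` record and arbitrary default weights; real projects would calibrate both the inputs and the weights against their own data.

```python
from dataclasses import dataclass


@dataclass
class Outcome:
    new_faults: int         # previously unseen failures exposed by this run
    new_lines_covered: int  # lines that no earlier test in the batch reached
    duration_s: float       # wall-clock execution time in seconds


def reward(outcome, w_fault=1.0, w_cov=0.01, w_cost=0.05):
    """Weighted sum: reward faults and new coverage, penalize run time."""
    return (w_fault * outcome.new_faults
            + w_cov * outcome.new_lines_covered
            - w_cost * outcome.duration_s)


# Illustrative weightings only: a safety-critical profile that lets fault
# detection dominate, and a prototyping profile that punishes slow tests.
SAFETY_CRITICAL = {"w_fault": 10.0, "w_cov": 0.05, "w_cost": 0.01}
PROTOTYPING = {"w_fault": 1.0, "w_cov": 0.01, "w_cost": 0.5}
```

Calling `reward(outcome, **SAFETY_CRITICAL)` versus `reward(outcome, **PROTOTYPING)` shows how the same test run can be valued very differently depending on project priorities.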

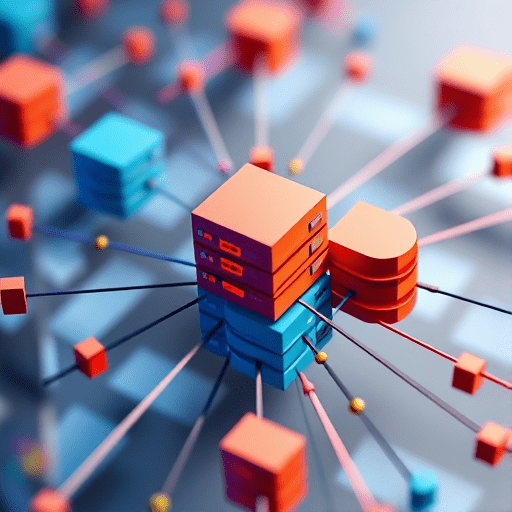

3. Architecture Overview

The self‑optimizing test framework typically comprises the following layers:

- Test Repository: Stores all existing tests and their metadata.

- Execution Engine: Runs tests, collects metrics, and feeds results back.

- RL Agent: Maintains a policy (often a neural network or a Q‑table) that maps test states to actions.

- Feedback Loop: Aggregates rewards, updates the policy, and decides on the next test batch.

Modern implementations may also use model‑based RL to anticipate the impact of test selection on future states, further improving efficiency.
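For the tabular case, the policy update performed by the feedback loop can be sketched with the standard Q-learning rule. The class name `QTablePolicy`, the string-valued states, and the default `alpha` and `gamma` are illustrative assumptions; this is the model-free variant, not the model-based one mentioned above.

```python
from collections import defaultdict


class QTablePolicy:
    """Tabular Q-learning over hashable states and a fixed action set."""

    def __init__(self, actions, alpha=0.5, gamma=0.9):
        self.q = defaultdict(float)  # (state, action) -> estimated return
        self.actions = list(actions)
        self.alpha = alpha           # learning rate
        self.gamma = gamma           # discount factor

    def best_action(self, state):
        return max(self.actions, key=lambda a: self.q[(state, a)])

    def update(self, state, action, reward, next_state):
        # Q(s,a) <- Q(s,a) + alpha * (r + gamma * max_a' Q(s',a') - Q(s,a))
        best_next = max(self.q[(next_state, a)] for a in self.actions)
        td_target = reward + self.gamma * best_next
        self.q[(state, action)] += self.alpha * (td_target - self.q[(state, action)])
```

A state here could be something as coarse as `"covers_changed_file"` versus `"unrelated"`; richer feature vectors usually call for a function approximator instead of a table.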

4. Pruning with Reinforcement Learning

Traditional test suite maintenance involves manual curation or static heuristics. RL can automate pruning by learning which tests are redundant or low‑value:

- State Representation: Each test is described by features such as code coverage vector, historical pass/fail rate, and execution time.

- Action Space: The agent chooses to keep or discard each test.

- Reward Signal: Discarding a test that rarely detects faults, or that covers only paths already exercised by other tests, yields a positive reward.

Over time, the agent tends toward a smaller yet still effective subset, which can substantially reduce test execution time.
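The reward for a discard action might be sketched as follows. The `SuiteEntry` record, the function `discard_reward`, and the three weights are hypothetical; the point is only that time saved counts in favor of discarding, while lost unique coverage and a history of catching faults count against it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SuiteEntry:
    name: str
    covered_lines: frozenset  # line ids this test executes
    failure_rate: float       # historical fraction of runs that failed
    duration_s: float         # typical execution time in seconds


def discard_reward(candidate, kept, time_weight=0.1,
                   line_penalty=1.0, fault_penalty=5.0):
    """Reward for discarding `candidate` while the tests in `kept` remain."""
    covered_elsewhere = frozenset().union(*(t.covered_lines for t in kept))
    unique_lines = candidate.covered_lines - covered_elsewhere
    saved = time_weight * candidate.duration_s
    lost = (line_penalty * len(unique_lines)
            + fault_penalty * candidate.failure_rate)
    return saved - lost
```

With these weights, a slow test whose lines are all covered elsewhere and which has never failed earns a positive discard reward, whereas a test that is the only one reaching some line is penalized.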

5. Prioritization on the Fly

When a build arrives, the RL agent can instantly generate a prioritized list of tests. It considers current code changes, recent failures, and historical data:

- Code‑Change Sensitivity: Tests covering modified files receive higher priority.

- Historical Failure Patterns: Tests that frequently fail in similar contexts are elevated.

- Execution Budget: The agent selects the prefix of the ranked list that maximizes reward within the time budget.

Because the policy is continually updated, prioritization adapts to shifting development patterns without manual intervention.
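The ranking-plus-budget step can be illustrated with a small function. The hand-written `score` below is only a placeholder for whatever value a learned policy would assign, and the dictionary keys (`name`, `files`, `failure_rate`, `duration_s`) are assumed field names.

```python
def prioritize(tests, changed_files, budget_s):
    """Rank tests, then return the longest ranked prefix that fits the budget.

    `tests` is a list of dicts with keys 'name', 'files', 'failure_rate'
    and 'duration_s' (assumed names for this sketch).
    """
    changed = set(changed_files)

    def score(test):
        # Placeholder scoring: change sensitivity plus historical failures.
        touches_change = 1.0 if set(test["files"]) & changed else 0.0
        return touches_change + test["failure_rate"]

    ranked = sorted(tests, key=score, reverse=True)
    selected, used = [], 0.0
    for test in ranked:
        if used + test["duration_s"] > budget_s:
            break  # stop at the first test that does not fit: strict prefix
        selected.append(test["name"])
        used += test["duration_s"]
    return selected
```

Stopping at the first test that does not fit keeps the result a true prefix of the ranking; a knapsack-style selection that skips oversized tests and continues is a reasonable alternative when durations vary widely.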

6. On‑Demand Test Generation

Beyond selection, RL can suggest new tests that target uncovered code paths:

- The agent identifies code regions with low coverage.

- Using a generative model (e.g., a grammar‑based or neural network generator), it produces candidate inputs.

- Candidate tests are evaluated for novelty and reward potential before being added to the repository.

In practice, this leads to a living test suite that continuously expands to meet evolving code complexity.
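As a rough illustration of the grammar-based option, the sketch below generates inputs from a toy arithmetic grammar and keeps only candidates whose coverage signature has not been seen before. The grammar, the `coverage_of` callable, and the function names are all assumptions; in a real system `coverage_of` would run the candidate against instrumented code.

```python
import random

# Toy grammar for arithmetic expressions (illustrative only).
GRAMMAR = {
    "<expr>": [["<term>", "+", "<expr>"], ["<term>"]],
    "<term>": [["<digit>"], ["(", "<expr>", ")"]],
    "<digit>": [[d] for d in "0123456789"],
}


def generate(symbol, rng, depth=0, max_depth=6):
    """Expand `symbol` recursively; force the shortest rule past max_depth."""
    if symbol not in GRAMMAR:
        return symbol  # terminal
    options = GRAMMAR[symbol]
    if depth >= max_depth:
        options = [min(options, key=len)]  # guarantees termination
    expansion = rng.choice(options)
    return "".join(generate(s, rng, depth + 1, max_depth) for s in expansion)


def novel_candidates(n, coverage_of, seen_signatures, seed=0):
    """Keep candidates whose coverage signature is new; updates the set."""
    rng = random.Random(seed)
    accepted = []
    for _ in range(n):
        candidate = generate("<expr>", rng)
        signature = coverage_of(candidate)
        if signature not in seen_signatures:
            seen_signatures.add(signature)
            accepted.append(candidate)
    return accepted
```

The novelty filter is what keeps the repository from filling up with inputs that exercise the same paths; estimating reward potential would be a further step layered on top.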

7. Integration into CI/CD

Embedding a self‑optimizing test suite into a continuous integration pipeline requires careful orchestration:

- Warm‑Start: Load a pre‑trained RL policy to avoid cold starts.

- Parallel Execution: Run selected tests across distributed agents to keep cycle times low.

- Metrics Dashboard: Visualize coverage, fault detection rate, and execution time to track progress.

- Policy Refresh: Trigger policy updates after each major build or after a defined number of runs.

With these practices, teams can experience near‑real‑time test optimization without disrupting existing workflows.
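The warm-start and policy-refresh steps could look something like this in a pipeline script. The JSON file layout, the function names, and the default of 20 runs between refreshes are assumptions for the sketch rather than features of any specific CI system.

```python
import json
from pathlib import Path


def load_policy(path, test_ids, default=0.0):
    """Warm-start: reuse saved per-test values; unseen tests get `default`."""
    p = Path(path)
    saved = json.loads(p.read_text()) if p.exists() else {}
    return {t: float(saved.get(t, default)) for t in test_ids}


def save_policy(path, values):
    """Persist the policy so the next pipeline run can warm-start from it."""
    Path(path).write_text(json.dumps(values, indent=2, sort_keys=True))


def should_refresh(runs_since_update, refresh_every=20, major_build=False):
    """Refresh after a major build or after a fixed number of runs."""
    return major_build or runs_since_update >= refresh_every
```

Storing the policy as a build artifact or in a shared cache lets every pipeline run start from the most recent saved state, and tests that were deleted since the last save simply drop out on load.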

8. Measuring Success

Key performance indicators (KPIs) for self‑optimizing test suites include:

| KPI | Description |
|---|---|
| Cycle Time Reduction | Percentage decrease in total test execution time. |
| Coverage Stability | Maintained or increased line/branch coverage despite pruning. |
| Fault Detection Rate | Number of unique bugs caught per run. |
| Policy Accuracy | Alignment between predicted high‑reward tests and actual outcomes. |
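Two of these KPIs are simple to compute once the raw numbers are logged. In the sketch below, "Policy Accuracy" is interpreted as precision at k (the share of the top-k predicted tests that actually failed); that interpretation and the function names are assumptions.

```python
def cycle_time_reduction(baseline_s, optimized_s):
    """Percentage decrease in total execution time relative to the baseline."""
    return 100.0 * (baseline_s - optimized_s) / baseline_s


def precision_at_k(predicted_ranking, actually_failed, k):
    """Fraction of the top-k predicted tests that really failed."""
    top_k = predicted_ranking[:k]
    if not top_k:
        return 0.0
    return sum(1 for t in top_k if t in actually_failed) / len(top_k)
```

For example, `cycle_time_reduction(600, 360)` gives `40.0`, and a ranking `["a", "b", "c", "d"]` with failures `{"a", "c"}` has a precision at 2 of `0.5`.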

Reported gains vary by project and baseline. Figures of up to 40% cycle time savings alongside a 5% improvement in fault detection have been cited, which makes a strong business case for adoption where such results hold.

9. Challenges & Mitigations

Implementing RL‑driven test suites is not without hurdles:

- Reward Sparsity: Rare bug discoveries can make learning slow. Mitigation: use surrogate rewards based on coverage or code churn.

- State Explosion: High‑dimensional state spaces strain policy learning. Mitigation: dimensionality reduction or feature engineering.

- Model Drift: Rapid code changes can render a policy obsolete. Mitigation: continuous retraining and adaptive learning rates.

- Interpretability: Stakeholders may distrust opaque RL decisions. Mitigation: provide explainable AI tools and visualizations of test importance.

Addressing these issues requires a combination of sound engineering practices and a commitment to iterative improvement.
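As one example of a drift mitigation, a constant step size makes value estimates weight recent outcomes more heavily than old ones, so the policy can track a test whose behavior changes. The function names and the `alpha` values below are illustrative assumptions.

```python
def ewma_update(estimate, observation, alpha=0.3):
    """Exponentially weighted moving average with constant step size alpha."""
    return estimate + alpha * (observation - estimate)


def track(observations, alpha=0.3, start=0.0):
    """Return the estimate after each observation, oldest first."""
    estimates, current = [], start
    for obs in observations:
        current = ewma_update(current, obs, alpha)
        estimates.append(current)
    return estimates
```

A test that passed for months and then starts failing after a refactor moves quickly under this rule: `track([1.0, 1.0, 1.0], alpha=0.5)` yields `[0.5, 0.75, 0.875]`, whereas a plain long-run average would barely shift.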

10. Future Directions

Research is advancing on several fronts that will further elevate self‑optimizing test suites:

- Meta‑RL for Hyperparameter Tuning: Agents that learn how to tune their own reward weights and learning rates.

- Multi‑Objective RL: Simultaneous optimization for coverage, fault detection, and test maintenance cost.

- Cross‑Project Knowledge Transfer: Sharing policies between projects to bootstrap learning.

- Hybrid Models: Combining RL with symbolic execution or fuzzing for more robust test generation.

As these innovations mature, the vision of a self‑sustaining, continuously evolving test suite will become increasingly attainable.

Conclusion

Self‑optimizing test suites powered by reinforcement learning offer a different way to manage software quality assurance. By learning to prune, prioritize, and generate tests on the fly, teams can achieve faster release cycles without compromising coverage or defect detection. Implementation demands careful design and ongoing tuning, but the payoff (reduced cycle times, stable or higher coverage, and smarter test selection) can be well worth the investment.

Ready to take your testing to the next level? Explore RL‑driven test automation today.